On the subject, is there a way to have the OLED automatically do Pixel Refresher every time you turn it on?

Not that I know of. It runs when you turn the TV off after 4 hours of use, and you can schedule a one-hour cycle at a time of your choosing or start one directly from the menu. There's also a big 4-hour compensation cycle after 1000 hours of use; I've had two of those.


Television Displays and Technology Thread: The ERA of OLED is Now

- Thread starter Jeremiah

- Status

- Not open for further replies.

Use PC mode for SDR gaming. Also, you don't need to set colour gamut to normal; leave it on wide. W50 is what you'll want if you like Warm 2 for films etc.

wide is not accurate

Think we have crossed wires, I was confused by your post:

"But HDR game mode can be changed to wide or normal. Has anyone switched it to normal and then set color temp to W40+ to be more accurate?"

You have no reason to change colour gamut to normal, wide is what you need for HDR gaming using 'game'.

SDR game is the preset that is locked to wide colour gamut.

Everywhere I read it's all about cumulative use. Just about anything static enough to cause retention could hypothetically cause burn-in depending on your brightness settings and cumulated hours. I'm unfamiliar with your specific logos, but if they're white then they'll likely cause not too much issue, especially if they're transparent. It's the strong reds and yellows that cause the most issues, those colours combined with Vivid level brightness settings on your TV is almost a self-fulfilling prophecy for burn-in.

My main concern with the idea of cumulative hours is that it makes burn-in sound inevitable. I'd hoped that the compensation cycles would help age the more abused pixels evenly, with breaks helping to reset a potential burn-in source so it doesn't pose a threat with cumulative use. But most seem to say that breaks don't help: if it's causing retention then enough hours of use will eventually lead to burn-in. Which kinda sounds terrifying to me. I mean, my OLED is almost a year and a half old, I've babied it with no signs of burn-in, I'm not wanting to upgrade for potentially another 3-5 years, and I'm pretty happy with the uniformity and love the now rare 3D support. It'd kill me if I got some nasty burn-in.

Going on the AV forums and reading about the horrors of burn-in is damn terrifying for such a price-y product, especially with no guarantees of warranty. Damn wish this wasn't the inherent flaw with this otherwise beautiful technology man, it's distressing.

This concerns me as well. As much as I love the picture quality of my B7 I'll probably take it back and go with LED. If I wasn't a gamer I'd probably keep it.

Any chance the 7 series is getting the HDR brightness reversal the 6's are getting?

No one actually knows

Yeah I am afraid to jump on OLED for the time being because I'm going to play D2 on it 99% of the time. I'm getting mixed statements on burn in so it's not concrete. But I have to make the decision soon so it's either an OLED or an X900F.

I'd do an X900F if you're even the least bit concerned

(but I also have like 150 hrs into D2 which was pretty much all i played at that time and have no burn in or IR on my C7)

I'm curious if OLED light and color settings have anything to do with increasing or decreasing chances of BI.

I was almost set on getting a 75" X900F. Then I saw this video on YouTube where it took 10 sec just from pressing the button on the remote until the settings menu opened.

Also when the volume was lowered/increased it was extremely laggy. Looked totally infuriating imho.

Any Sony owners here that can speak for how bad the Android TV interface really is?

I'm curious if OLED light and color settings have anything to do with increasing or decreasing chances of BI.

I thought I read that if you keep OLED light <60 you'll reduce the risk of burn-in.

Quick question everyone.

I have a Samsung 4K HDR TV.

And yesterday I launched Deus Ex Mankind Divided for the first time in years.

It prompted me that my TV is HDR capable and if I want to switch.

Of course I do.

The image changes but it really just looks brighter and as if someone applied a sharpness filter to the image and turned it up to eleven.

The PS4 main menu looks the same while in game.

Is this normal? It really doesn't look particularly good.

I'm curious if OLED light and color settings have anything to do with increasing or decreasing chances of BI.

That absolutely helps, most reports of burn-in I've seen have been from people using a higher OLED light setting.

Quick question everyone.

I have a Samsung 4K HDR TV.

And yesterday I launched Deus Ex Mankind Divided for the first time in years.

It prompted me that my TV is HDR capable and if I want to switch.

Of course I do.

The image changes but it really just looks brighter and as if someone applied a sharpness filter to the image and turned it up to eleven.

The PS4 main menu looks the same while in game.

Is this normal? It really doesn't look particularly good.

Deus Ex is kinda borked in HDR

You need to turn the brightness down to 35 to have it look vaguely normal

DV black level over HDMI fix

Just for game mode or across the board ?

all HDMI inputs.

There is nothing this changes with game mode.

This is not the same update the 6 series received.

I was almost set on getting a 75" X900F. Then I saw this video on YouTube where it took 10 sec just from pressing the button on the remote until the settings menu opened.

Also when the volume was lowered/increased it was extremely laggy. Looked totally infuriating imho.

Any Sony owners here that can speak for how bad the Android TV interface really is?

Really bad, sometimes doing a reboot helps by holding the power button on the remote for a few seconds.

I was almost set on getting a 75" X900F. Then I saw this video on YouTube where it took 10 sec just from pressing the button on the remote until the settings menu opened.

Also when the volume was lowered/increased it was extremely laggy. Looked totally infuriating imho.

Any Sony owners here that can speak for how bad the Android TV interface really is?

I barely set up my 75" X900F yesterday. I went home from work for lunch to set it up, took the whole lunch break just to get the initial setup done, the automatic one that starts when you first turn on the TV, there was an update that I did so that was why. I think it was for Dolby Vision. Anyway, navigating through the setup menu was no problem at all for me. Responsive and quick. Once I actually get it set up and tweak some settings I can record a video and you can see what it's like.

Yeah I am afraid to jump on OLED for the time being because I'm going to play D2 on it 99% of the time. I'm getting mixed statements on burn in so it's not concrete. But I have to make the decision soon so it's either an OLED or an X900F.

This was the exact same debate I was having with myself. I was going to go Sony A8F at 65" or the X900F at 75". Decided on the 900F. I didn't want to worry about burn in, plus I got 10 extra inches for less money than the 65 OLED.

Also, I'm assuming D2 is D2: The Mighty Ducks? It's a great movie, Bombay really steps it up and brings the kids some much needed joy. And he learns a little something along the way. Don't know why you'd have any burn in issues with it though.

If you keep in mind that an HDR image primarily falls within the range of an SDR image, those areas at 100nit and above are mostly reserved purely for things like light sources and sun spots. HDR10 and Dolby Vision use absolute values, so if the content says a specific pixel is 1000nit, then the TV tries to illuminate it to 1000nit.

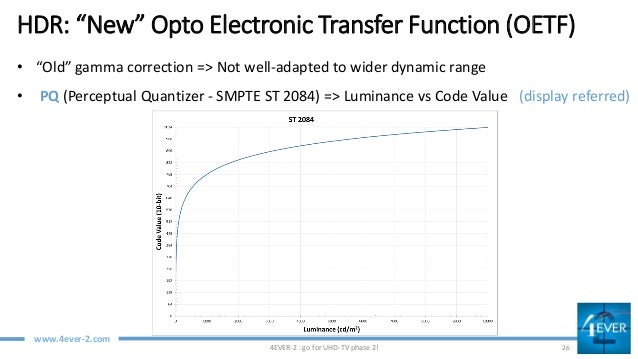

So this graph shows the ST2084 PQ which both HDR10 and Dolby Vision use

on the left you can see the code value (0-1023)

and along the bottom you can see the nit value that each code value is supposed to generate.

In the 8-bit RGB world the equivalent is 0-255, 0 being black and 255 being white.

Rather than say 0 is black and 1023 is white, with the extra data used in between to reduce gradations, roughly half of the data is used to represent the range from black (0) to white at 100nits (code value 520). Then you have the other 521-1023 code values reserved for things that are whiter than 100 nits.

So a piece of paper on a table on an overcast day will reflect back less light than the same piece of paper on a very sunny day, this is what this additional data can be used to display more accurately.

Likewise in SDR land you might use the same white to represent a piece of paper and the sun, which of course we know is nothing like real life.

This distribution of the HDR code values is also why colour banding can still exist, as the reality of it is that there are only twice as many code values there for the bulk of the image, so there are now twice as many bands*
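To put numbers on the code value split described above, here is a minimal Python sketch of the ST 2084 PQ curve. The constants are the ones defined in the standard; the function names are my own, and mapping the signal onto the full 0-1023 range (rather than the narrow video range many devices actually send) is an assumption made to match the graph's axis.

```python
# SMPTE ST 2084 (PQ) constants, as defined in the standard.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PEAK_NITS = 10000.0  # PQ is defined up to 10,000 nits


def pq_eotf(signal):
    """Convert a normalised PQ signal (0.0-1.0) to absolute luminance in nits."""
    p = signal ** (1 / M2)
    return PEAK_NITS * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1 / M1)


def pq_inverse_eotf(nits):
    """Convert absolute luminance in nits to a normalised PQ signal (0.0-1.0)."""
    y = (nits / PEAK_NITS) ** M1
    return ((C1 + C2 * y) / (1 + C3 * y)) ** M2


def nits_to_code(nits, bits=10):
    """Full-range code value for a luminance (assumption: no narrow-range offset)."""
    return round(pq_inverse_eotf(nits) * (2 ** bits - 1))


if __name__ == "__main__":
    for level in (0, 100, 650, 1000, 4000, 10000):
        print(f"{level:>6} nits -> code {nits_to_code(level)}")
```

Running it shows 100 nits landing around the middle of the 10-bit range, which is the "half the codes for 0-100 nits" point made above.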

This graph shows what the difference between an SDR and a 10000nit HDR image is

As you can see, the bulk of what makes up the picture falls within that 100nit range.

Obviously the problem is if the TV physically can't deliver that brightness, how should it handle it?

The metadata that is sent alongside HDR content helps the TV to understand how the SDR image would look (it's often created by running the SDR and HDR grades through software which compares them). So in a situation where the TV can't display the information it is being presented with, the TV knows how to make that image look correct and can map the tones of brightness down to a level that the TV can display. The goal is to ensure that you make use of the additional data and create an image that has more dynamic range than the SDR version would have had. Extended dynamic range, if you like.

Both HDR10 and DV give this information. The primary difference between the 2 is that DV can give more metadata to make this tone mapping algorithm more granular (frame by frame or shot by shot; HDR10+ will do this too) and DV has a preset set of rules as to how you tone map downwards. With HDR10 these rules are set by the manufacturer of the TV and may even be unique to the model. Ultimately they are trying to achieve the same thing: interpret data that cannot be displayed in a way that looks right, within the constraints of what the hardware can display.

Dolby Vision is the theatrical standard, because it is more predictable (the TV manufacturer's recommendations are taken out of consideration).

So in the absence of metadata, the TV will simply stop trying to display highlights beyond what it can display. On a 650nit TV, something at 650nits and something at 1000nits will both display at 650nits, which will result in lost detail.
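A rough sketch of that difference in Python. The hard clip is what happens with no tone mapping; the roll-off is a deliberately simple linear compression I made up for illustration, not Dolby's or any manufacturer's actual algorithm. The function names and the 0.75 knee are hypothetical.

```python
def hard_clip(nits, display_peak):
    """No tone mapping: anything brighter than the panel's peak is clipped."""
    return min(nits, display_peak)


def simple_rolloff(nits, display_peak, content_peak, knee_fraction=0.75):
    """Illustrative tone map: pass through below a knee, compress above it.

    The knee position and the linear compression are assumptions made for
    this example only; real TVs use their own (usually smoother) curves.
    """
    if content_peak <= display_peak:
        return nits  # the content already fits, nothing to map
    knee = knee_fraction * display_peak
    if nits <= knee:
        return nits
    # Squeeze the range [knee, content_peak] into [knee, display_peak].
    t = (min(nits, content_peak) - knee) / (content_peak - knee)
    return knee + t * (display_peak - knee)


if __name__ == "__main__":
    display_peak, content_peak = 650, 1000  # content peak would come from metadata
    for level in (100, 400, 650, 800, 1000):
        clipped = hard_clip(level, display_peak)
        mapped = simple_rolloff(level, display_peak, content_peak)
        print(f"{level:>5} nits -> clip {clipped:>6.1f} | roll-off {mapped:>6.1f}")
```

With the clip, 650 and 1000 nits come out identical on a 650 nit panel. With the roll-off they stay distinguishable, at the cost of the upper midtones being displayed a little darker than mastered.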

This becomes less of an issue as the TVs get better and better, you can already get TVs that get close to 2000nit, the chances of major perceivable loss of detail become smaller and smaller.

So in a nutshell : Metadata is only useful when you have a TV that can't hit the nit values of the content. As time goes by, TVs will get better and the need for metadata becomes zeroed.

At which point Dolby will remove the cap on 4000nits and push it up to 10,000, requiring firmware updates / new chips/new TVs...

With videogames, the content is created on the fly, so they can tell the game to make sure that the sun never becomes brighter than the maximum capability of the display, with everything else within the game that produces or reflects light working relative to this (these are the games with HDR sliders). The game operates within a fixed range set by the user, and the TV displays exactly what it is told to display. No metadata is required.

I understand how the PQ EOTF works and DV. I was more curious about gaming. Dolby's patent on dynamic metadata and dynamic tone mapping suggests that tone mapping applies if the target display's peak brightness falls below or exceeds the mastering monitor's peak brightness. Vincent Teoh believed otherwise until he performed his HDR10 vs DV test on high nit displays, proving the benefits of dynamic metadata and dynamic tone mapping. The Dolby patent specifically states that 1:1 master-target means no tone mapping.

DaVinci Resolve has three peak nit targets available: 600, 1000, and 2000. Since the brightest pixels in a lot of the available film content are below the mastering monitor's peak nits, tone mapping still applies, or you will still get blown-out whites and highlights.

That absolutely helps, most reports of burn-in I've seen have been from people using a higher OLED light setting.

The highest OLED light I use is with cinema mode, and game mode, a value of 80. What is considered a "higher" value? 80 is the default for those modes.

Also, just received an OTA update, version 04.71.00

I barely set up my 75" X900F yesterday. I went home from work for lunch to set it up, took the whole lunch break just to get the initial setup done, the automatic one that starts when you first turn on the TV, there was an update that I did so that was why. I think it was for Dolby Vision. Anyway, navigating through the setup menu was no problem at all for me. Responsive and quick. Once I actually get it set up and tweak some settings I can record a video and you can see what it's like.

Missed your post from last page. Any feedback regarding the interface is highly appreciated. My biggest fear is that it will feel too sluggish.

I'm pretty confident the tv is amazing in all other aspects.

Really bad, sometimes doing a reboot helps by holding the power button on the remote for a few seconds.

Thanks. Doesn't feel very premium for a top of the line TV.

The highest OLED light I use is with cinema mode, and game mode, a value of 80. What is considered a "higher" value? 80 is the default for those modes.

Also, just received an OTA update, version 04.71.00

I'm an idiot who likes to leave the OLED light on 100 on everything.

Should I compromise and have it on 80? Is it well documented that setting it to 100 increases the risk of burn-in?

The highest OLED light I use is with cinema mode, and game mode, a value of 80. What is considered a "higher" value? 80 is the default for those modes.

Also, just received an OTA update, version 04.71.00

Anything over 50 is considered pretty extreme by most enthusiasts for SDR content. It really depends on what you're putting your TV through, static game HUDs with vibrant colours absolutely pose a threat at that level. Now keep in mind panel variations, so many factors. But a general rule of thumb is to keep the OLED light on the down low.

I'm an idiot who likes to leave the OLED light on 100 on everything.

Should I compromise and have it on 80? Is it well documented that setting it to 100 increases the risk of burn-in?

You're hugely increasing above black artifacting at 100 too for SDR.

While I'm in here, I think I'll ask something I've been wondering about for a while. In terms of 4K upscaling, the actual TV itself, meaning the X900F, does it. However, I have a Denon X4400H as well and all my HDMIs are going into it. It supposedly has some killer 4K upscaling tech going on. My question is, will my receiver and TV be doing double duty? Do I need to turn the TV's scaling off? Is that even something I can do? I just want to make sure the video is as clean as possible.

I'd be very, very, very surprised if that scaler was able to outperform your Sony's. Esp now that the 900F is on the X1E chip.

Been watching Punisher and now JJ Season 2 on my Sony 930e with DV, but man, I can't say it looks great. The grain truly ruins it. Why do they even use it? It's trash and ruins the picture severely.

I'd be very, very, very surprised if that scaler was able to outperform your Sony's. Esp now that the 900F is on the X1E chip.

Is this something that you turn on and off (either on the AVR or the TV)?

Think we have crossed wires, I was confused by your post:

"But HDR game mode can be changed to wide or normal. Has anyone switched it to normal and then set color temp to W40+ to be more accurate?"

You have no reason to change colour gamut to normal, wide is what you need for HDR gaming using 'game'.

SDR game is the preset that is locked to wide colour gamut.

Correct, SDR is locked. But I believe normal is the accurate choice for all HDR content. LG had to clarify since people thought it should be wide. Wide oversaturates reds and greens.

So setting HDR to normal and W45 should be more accurate, if you will.

While I'm in here, I think I'll ask something I've been wondering about for a while. In terms of 4K upscaling, the actual TV itself, meaning the X900F, does it. However, I have a Denon X4400H as well and all my HDMIs are going into it. It supposedly has some killer 4K upscaling tech going on. My question is, will my receiver and TV be doing double duty? Do I need to turn the TV's scaling off? Is that even something I can do? I just want to make sure the video is as clean as possible.

I have an x3300, I'd choose the Sony over denon.

I've been eyeing the same soundbar, especially because it is capable of 4K HDR passthrough including Dolby Vision. I want to hook up all my devices to the TV (LG C8) and just run one HDMI cable to the soundbar. Have you had any experience with this? I read that some people had problems with passthrough but I'm not sure what kind of problems these could be. I want to eliminate having to use multiple remotes and change volume/source on different devices.

Haven't tried the passthrough yet. I know there is a soundbar menu setting that enables HDMI 2 full bandwidth (needed for HDR) and that I see separate listings on my TV for the soundbar menu and the HDMI slot, so in theory that part is covered. I imagine TV volume is still controllable using ARC, but something I need to try first...

So I have a PS4 Pro and an Xbox One X, what do I need to make sure to do to get HDR working properly on everything? I've read that the brightness sometimes is too low, do I need to max it out? Anything else I need to make sure of on the TV settings, color gamut and whatnot?

Check the RTINGS settings page. Brightness should be set according to your room lighting. In my bright living room I do use max brightness as it doesn't crush details, but even the 65 brightness setting looks good. Honestly though little to change out of the box except to make sure your console inputs are set to Game Mode picture setting, and if you have both PS4P and X1X, they should be using HDMI 2 and HDMI 3 since those are the high bandwidth ports.

Anything over 50 is considered pretty extreme by most enthusiasts for SDR content. It really depends on what you're putting your TV through, static game HUDs with vibrant colours absolutely pose a threat at that level. Now keep in mind panel variations, so many factors. But a general rule of thumb is to keep the OLED light on the down low.

Interesting. I'm only using Cinema for HDR content (80 light level), and ISF Dark for SDR (60 light level).

Does lowering light level, say for Cinema, require adjusting or compensating with other settings?

This concerns me as well. As much as I love the picture quality of my B7 I'll probably take it back and go with LED. If I wasn't a gamer I'd probably keep it.

It sounds like if you're a heavy watcher of cable news (which I am) you're fucked there too.

Is this something that you turn on and off (either on the AVR or the TV)?

Yeah you turn it on or off and mess with all kinds of settings in the AVR. The Sony (or any TV) always automatically scales content to fit its native resolution. If it didn't, you'd play 1080p content and it would literally play in a quarter of the screen and the rest would be black.

The question really is where to scale. And in that scenario, I'd be very surprised if it was the Denon.
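For the "quarter of the screen" bit, the arithmetic is easy to check. A tiny Python sketch, assuming a standard 3840x2160 UHD panel and a 1920x1080 source (the function name is just for illustration):

```python
def unscaled_screen_fraction(source, panel):
    """Fraction of the panel's pixels a source would cover if shown 1:1."""
    src_w, src_h = source
    panel_w, panel_h = panel
    return (src_w * src_h) / (panel_w * panel_h)


if __name__ == "__main__":
    fraction = unscaled_screen_fraction((1920, 1080), (3840, 2160))
    print(f"1080p shown 1:1 on a UHD panel covers {fraction:.0%} of the screen")
```

So something always has to turn each 1080p pixel into four panel pixels (or do something smarter); the only choice is whether the AVR or the TV does it.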

I was almost set on getting a 75" X900F. Then I saw this video on YouTube where it took 10 sec just from pressing the button on the remote until the settings menu opened.

Also when the volume was lowered/increased it was extremely laggy. Looked totally infuriating imho.

Any Sony owners here that can speak for how bad the Android TV interface really is?

Yes, getting into the picture adjustment settings and other parts of the UI can take a looong time. The UI is total garbage

Yeah you turn it on or off and mess with all kinds of settings in the AVR. The Sony (or any TV) always automatically scales content to fit its native resolution. If it didn't, you'd play 1080p content and it would literally play in a quarter of the screen and the rest would be black.

The question really is where to scale. And in that scenario, I'd be very surprised if it was the Denon.

Makes sense, thanks bud.

Interesting. I'm only using Cinema for HDR content (80 light level), and ISF Dark for SDR (60 light level).

Does lowering light level, say for Cinema, require adjusting or compensating with other settings?

Light level is "supposed" to be maxed out on HDR content.

(X900F) Accessing the Picture Adjustment menu takes a variable amount of time based on what you're doing with the TV. If I just switched to 4K HDR, I timed it at 3 seconds, but then it's loaded and smooth. If I accessed the menu system recently, it loads almost instantly. Using the Action menu seems to speed things up too?

If I just turned the TV on, and haven't accessed the TV menu, then yeah, I'm guessing it takes awhile to load settings. The Sony Sets backload everything so you can launch the TV and change inputs almost instantly, but then you need to wait on the other bits. Compared to the 2014 models it's perfectly smooth. But yeah maybe compared to LG it's bad? I don't need to change settings everyday, so don't notice it much

I guess I'll just keep it at 80 lol.

I'm a little confused with game mode for SDR, light level is by default 80, so I will lower that to 50-60. But someone mentioned PC mode for SDR Game Mode, how is pc enabled?

My apologies for all the questions, I'm just used to my plasma where it was set it and forget it. I never toggled settings, ISF Night mode and that was all I needed lol.

I guess I'll just keep it at 80 lol.

I'm a little confused with game mode for SDR, light level is by default 80, so I will lower that to 50-60. But someone mentioned PC mode for SDR Game Mode, how is pc enabled?

My apologies for all the questions, I'm just used to my plasma where it was set it and forget it. I never toggled settings, ISF Night mode and that was all I needed lol.

No need to apologize, HDR makes things more complicated and these TV manufacturers do a poor job explaining the best way to watch it in my opinion. If you like it at 80, just keep it at 80.

No need to apologize, HDR makes things more complicated and these TV manufacturers do a poor job explaining the best way to watch it in my opinion. If you like it at 80, just keep it at 80.

But is it still safer than keeping it at 100, or is there no difference?

I just tried it myself, and 80 looks like the sweet spot: any lower and the picture gets darker for me, any higher and it's very minor.

Contrast looks like it needs to stay 100 for me no matter what.

Interesting. I'm only using Cinema for HDR content (80 light level), and ISF Dark for SDR (60 light level).

Does lowering light level, say for Cinema, require adjusting or compensating with other settings?

Ideally, you're gonna want to leave OLED light and contrast at 100 for the best performance your TV can offer for HDR content. Cinema mode especially shouldn't look as blown out with those settings as SDR content would. Also, if you're 7+ you'll wanna put dynamic contrast on low to enable dynamic metadata. You can adjust your OLED light for HDR content if you please, but it's recommended to use the 100/100 for HDR to get as much out of your TV as possible. For Dolby Vision, however, leave the default OLED light and contrast alone and only adjust the colour temperature; everything else should be perfect by default. Your OLED light for SDR can be whatever you want, but if you're in a dark room then getting about 150 nits of brightness is the recommended level to view your content at. That would be an OLED light of around 35-50 for SDR.

Hey all, I just wanted to say I've finally entered the 4K HDR era! I just picked up a Sony 75" X900F and a new Denon X4400 receiver to feed all my HDMI goodies into.

So I have a PS4 Pro and an Xbox One X, what do I need to make sure to do to get HDR working properly on everything? I've read that the brightness sometimes is too low, do I need to max it out? Anything else I need to make sure of on the TV settings, color gamut and whatnot?

Also, since I'm using the receiver to feed all my devices through, does anything need to be set through there? It does 4K/60 and does upscaling and all that for HD and SD stuff, it will pass through all the signals just fine?

edit: a pic! Spent from 7 last night till 2 am setting everything up, moving old TV, breaking down old stand, etc. Got the entertainment center from IKEA, actually really nice! New receiver, the TV, and a 4K Apple TV to go with it.

bonus, me in the reflection

Nice setup! Any way to tuck those speaker wires into the poles of the speaker stands? They just stand out from an otherwise clean setup. For your receiver, you have to set it to output 4K enhanced, just like switching the HDMI ports to enhanced on the TV itself. Make sure that any receiver upscaling is turned off so the TV is the only thing doing the upscaling. Other than that, enjoy. When I had my 900F, there were some good settings in the owners thread over at avsforum.com.

Nice setup! Any way to tuck those speaker wires into the poles of the speaker stands? They just stand out from an otherwise clean setup. For your receiver, you have to set it to output 4K enhanced, just like switching the HDMI ports to enhanced on the TV itself. Make sure that any receiver upscaling is turned off so the TV is the only thing doing the upscaling. Other than that, enjoy. When I had my 900F, there were some good settings in the owners thread over at avsforum.com.

You had the 900f too? Lol

LG OLED 2016 models...I took the plunge. Reset my HDR game mode settings...

Hallelujah! A BRIGHTER HDR GAME MODE IS BACK!!!

Nice just updated my C6 as well and the HDR Game mode now looks great without having to turn on dynamic contrast.

So I have a PS4 Pro and an Xbox One X, what do I need to make sure to do to get HDR working properly on everything? I've read that the brightness sometimes is too low, do I need to max it out? Anything else I need to make sure of on the TV settings, color gamut and whatnot?

You turned on Enhanced HDMI in the settings for the port you're using, right?

Ports 2&3 are the full bandwidth ports and 3 is also the ARC port.

Had to do that on my receiver as well, not sure if the Denons have a similar setting.

Once you do that the Pro and X should recognize that your TV supports all the 4K options and HDR.

But is it still safer than keeping it at 100, or is there no difference?

I just tried it myself, and 80 looks like the sweet spot: any lower and the picture gets darker for me, any higher and it's very minor.

Contrast looks like it needs to stay 100 for me no matter what.

You need an LCD.

You're just going to get burn in again because you leave OLED light way too high for SDR.

If you want a super bright artificial image, the Q9 is calling your name.
