
Digital Foundry: Anthem Console First Look: Xbox One X/ PS4 Pro Head-To-Head

- Thread starter Vexii

Definitely. But tbh we had 1080p60fps locked games on launch day, we had 4K60fps locked games on the upgrades launch as well, we've even had 60fps all the way back on NES. So the hardware is only a small part of the problem.

Ryzen should help massively with framerate compared to the current Jaguar CPUs we are stuck with at the moment.

You're delusional - every thread like this you talk about DF Xbox bias. Did you miss the first 4 years of this generation? Also you forgot to mention the ROPS again for the nth time.

What? They don't even spoil the thing in their title or subtitle. This is the title of their RDR2 article:

That was even inaccurate, as it plays best on Pro.

This is their Resident Evil 2 title:

Now compare this with the KH3 video (the game plays best on Pro):

And now their Anthem video title (the game plays best on Pro):

You notice a pattern ?

Yeah, like I say, all those Pro owners dying to play Spiderman at 720/60 really need to be listened to.

Most games just aren't built that way.

I completely understand that not every game can hit 60fps, simply because some game engines take longer than 33ms to set up a frame.

But, it would still be really nice to have that option.
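For anyone wondering where the 33ms figure comes from, it is just frame-time arithmetic. A rough Python sketch (the function names are made up for illustration): a locked 30fps leaves about 33.3ms per frame, while a locked 60fps leaves only about 16.7ms, so an engine that needs around 33ms per frame can hold 30 but not 60.

```python
def frame_budget_ms(target_fps: float) -> float:
    """Time available per frame, in milliseconds, at a given target frame rate."""
    return 1000.0 / target_fps


def max_fps(frame_time_ms: float) -> float:
    """Best-case frame rate if every frame takes frame_time_ms to produce."""
    return 1000.0 / frame_time_ms


if __name__ == "__main__":
    for fps in (30, 60):
        print(f"{fps} fps -> {frame_budget_ms(fps):.1f} ms per frame")
    # An engine that needs 33 ms per frame tops out just above 30 fps.
    print(f"33 ms per frame -> {max_fps(33.0):.1f} fps at best")
```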

More like we need more skilled developers.

Bear in mind we're dealing with a CPU that was originally designed for mobile formats. In a lot of cases dropping as low as 720p might not alleviate framerate issues as often as one might think. This isn't the catch-all solution that a lot of people seem to think it might be.

This really makes me wish that all games on the Xbox One and Playstation 4 offered an actual resolution selector as a performance option.

Sometimes you want to prioritize framerate over resolution. Having the option to select your resolution is the best solution.

Not to say that more options is a bad thing, heck no. But it's worth remembering just how CPU bound these machines are.
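To put the CPU-bound point in rough numbers, here is a toy Python model. It assumes CPU and GPU work overlap so the slower of the two sets the frame time, that GPU cost scales linearly with pixel count, and that CPU cost does not change with resolution. The millisecond figures are invented purely for illustration, not measurements from any game.

```python
def frame_time_ms(cpu_ms: float, gpu_ms_at_4k: float, pixel_scale: float) -> float:
    """Toy model: frame time is whichever of CPU or GPU takes longer.

    pixel_scale is the fraction of 4K pixels being rendered
    (1.0 for 4K, 0.25 for 1080p, 1/9 for 720p).
    """
    gpu_ms = gpu_ms_at_4k * pixel_scale  # assumed linear in pixel count
    return max(cpu_ms, gpu_ms)


if __name__ == "__main__":
    CPU_MS = 30.0         # hypothetical CPU cost per frame
    GPU_MS_AT_4K = 40.0   # hypothetical GPU cost per frame at 4K
    for name, scale in (("4K", 1.0), ("1080p", 0.25), ("720p", 1 / 9)):
        ms = frame_time_ms(CPU_MS, GPU_MS_AT_4K, scale)
        print(f"{name}: {ms:.1f} ms per frame, {1000.0 / ms:.1f} fps")
```

With these made-up numbers, going from 4K to 1080p helps because the GPU stops being the bottleneck, but going further down to 720p changes nothing since the CPU is already the limit.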

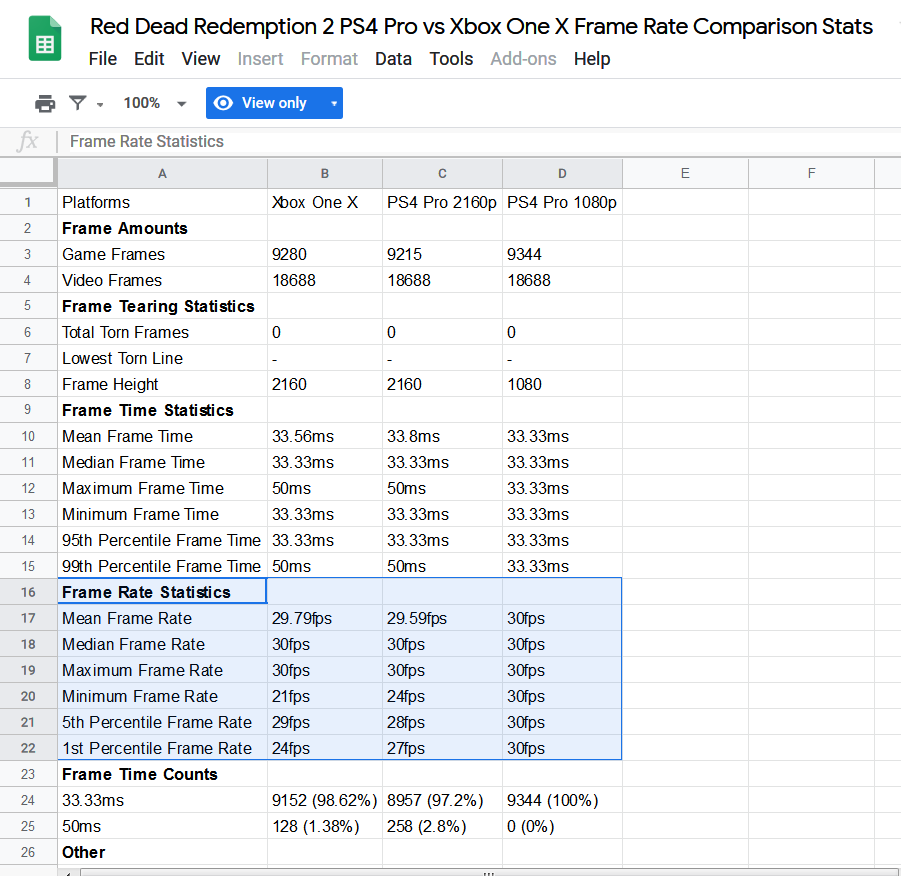

Is this reply for real? You are trying to defend forcing a game to 1080p on a console designed for 4K vs another one that runs the game more often than not at 30fps at 4 times the resolution? Like seriously?

Au contraire, Pro's forced 1080p mode is the only way to play RDR2 with a 100% locked 30 FPS without any drops, so that poster is right. RDR2 does *play* the best on Pro.

https://www.youtube.com/watch?v=sOiXyBebIXQ

This shit has to stop. Plays best for RDR2 is definitely on Xbox One X, no reason to try to spin it any other way. PS4 Pro was designed for 4K, if you have to force a game to 1080p then the system itself has failed at delivering the experience it's being marketed for.

If you want to say it runs better on PS4 vs Xbox One S, sure, go for it. But Xbox One X and PS4 Pro are to be compared at 4K, as that is their main selling point.

As for Anthem, BioWare needs to get their shit together, as tons of games run at higher res on X with more stable frame rates than Anthem. I'm not planning on buying this game as I'm more interested in The Division 2, but it'll be a shame if this performance problem isn't resolved by the time the game is released.

Definitely. But tbh we had 1080p60fps locked games on launch day, we had 4K60fps locked games on the upgrades launch as well, we've even had 60fps all the way back on NES. So the hardware is only a small part of the problem.

Oh yeah, it's still down to the developers' choice, but a lot seem to be pushing for high graphics and decent framerates, especially since Pro and X came out. And with loads of games being multiplatform these days, they shouldn't have to tone down the console versions as much if the game is on PC too.

Ryzen should be great for all platforms going forward.

It's a worthy trade off when I'm on PC and I prioritize performance on Overwatch to go from 90 to 200fps to make sure I have the most stable performance at 1440p. But to say you lower 4 times the resolution because you gain 10fps and a much smoother experience is a complete joke and is barely noticeable. It is far from a worthy trade off, especially on a PVE game like RDR2 or even KH3.

You'd be surprised at how some people can be sticklers for performance over visuals. It's a worthy trade off for some.

The demo was a mess performance-wise. Base PS4 was awful with noticeable drops all the time and the PC was super inconsistent despite my relatively powerful hardware (8600k OC'd & 1070ti). Like seriously, I put everything on low just to make sure I wasn't hitting bottlenecks and it didn't make any difference. On top of all of that, I was getting these massive framerate drops seemingly out of nowhere, even when nothing crazy was happening.

It is a demo after all, so hopefully the final game is much better.

Never understood this mentality.

Did you not buy Destiny or Assassin's Creed Unity or any other parity'd game from 2013 - 2017 either?

Really hope the final game is much better than this.

No buy if there is a parity between consoles.

Never understood this mentality.

Did you not buy Destiny or Assassin's Creed Unity or any other parity'd game from 2013 - 2017 either?

Looking forward to seeing the final game comparison. If they can make good improvements on the X I'll pick it up. I'm not playing a 20fps game in 2019.

You didn't buy Burnout Paradise or Dead Space last gen? :-(

Really hope the final game is much better than this.

No buy if there is a parity between consoles.

So you can grab a couple of frames advantage at the cost of visuals for what, a couple of games? I don't think they'll bother.

No one would object to a choice given to the user, no ?

Consoles are still holding PC back, I see. Well I know, you can't create a PC game that focuses on that 5% of people using a GTX1080 or above, sadly.

>says consoles are holding back pc

>says that pc gamers are holding back pc

Which is it, huh? 😉

It's as real as replies like this:

Really hope the final game is much better than this.

No buy if there is a parity between consoles.

People getting upset over parity will never get not-weird. It was weird when AC Unity was supposedly held back on PS4 for parity with XBO, it's as weird now when people comment games like Anthem or RE2 are intentionally held back on one console because of parity.

Never understood this mentality.

Did you not buy Destiny or Assassin's Creed Unity or any other parity'd game from 2013 - 2017 either?

Is it really difficult to understand?

My money. I don't support parity, crappy ports and other sorts of things that devs do.

Why do you assume there's forced parity though ?

Yes. I noticed this too and it's odd. I was thinking it was due to the fact that we've looked at a lot of big Japanese titles (it's obvious Xbox isn't terribly important to that audience) but Anthem is bizarre.

Is it just me or have the last few games analyzed by DF performed better on the Pro compared to the X? As someone that owns an Xbox One X that seems a bit concerning.

Demo performance on PS4 Pro was bad enough for me to cancel my preorder. I'll play it when they've perhaps optimized it further.

Demo performance on X reminded me of stuff I've played in 'Game preview'. Ugly IQ, choppy performance. Maybe it's personal taste, but I find the actual aesthetic (right down to the menus) drab and uninspired.

The playstation pro is the True 1080p console of the generation!

Hyperbole, but honestly it's pretty damning that a console that was pegged as a mid gen console for 4k TV's (and is advertised as much by Sony) is better at 1080P.

I mean, the same is true for very high end PCs. You'll always get higher framerates at lower resolutions, it's just how things work.

Is it really difficult to understand?

My money. I don't support parity, crappy ports and other sorts of things that devs do.

Okay fine.

You didn't answer my question though: Did you not buy Destiny or Assassin's Creed Unity (or other titles that had parity years ago) either?

Well, at least even a 2080 Ti isn't fit for 60 FPS at 4K with the engine (it drops down to 30 FPS when there's a lot of action happening), which is pretty disappointing for a 1200 dollar card:

>says consoles are holding back pc

>says that pc gamers are holding back pc

Which is it, huh? 😉

https://www.youtube.com/watch?v=XD7jFk1uIEQ

I honestly don't think the next gen consoles will be able to deliver next gen engines at 60fps@4k if this game is already so taxing...

Yes. I noticed this too and it's odd. I was thinking it was due to the fact that we've looked at a lot of big Japanese titles (it's obvious Xbox isn't terribly important to that audience) but Anthem is bizarre.

KH3 runs at higher resolution and framerate on X against Pro when played how the developers intended. Not a great example of developers not being interested in Xbox tbf.

It's as real as replies like this:

People getting upset over parity will never get not-weird. It was weird when AC Unity was supposedly held back on PS4 for parity with XBO, it's as weird now when people comment games like Anthem or RE2 are intentionally held back on one console because of parity.

It's as weird as your attitude. Just because you accept it and settle for less doesn't mean everyone has to. You do you and let others do theirs.

You expect me to throw my money and support inferior ports on a better hardware?

I vote with my wallet.

Okay fine.

You didn't answer my question though: Did you not buy Destiny or Assassin's Creed Unity (or other titles that had parity years ago) either?

Nope, I didn't.

It's as weird as your attitude. Just because you accept it and settle for less doesn't mean everyone has to. You do you and let others do theirs.

You expect me to throw my money and support inferior ports on a better hardware?

I vote with my wallet.

As we all should, however your posts are implying there is forced parity or BioWare is deliberately holding back one version of the game over the other ...

Purely not buying the game because in general its performance sucks, that I completely understand, but you specifically pointed out 'parity' and shallow ports.

Why are you assuming this, is what I would like to know. Just seems a bit shallow to be this salty over perceived forced parity, when there most likely is none.

Oh, I was thinking more of Ace Combat 7 and RE2 (which is slightly faster on X overall but slower in some scenes - it's also softer).

KH3 runs at higher resolution and framerate on X against Pro when played how the developers intended. Not a great example of developers not being interested in Xbox tbf.

The workaround on PS4 to boost framerate in the cases of KH3 and RDR2 is hardly presented as an option though. If Sony want to shout from the rooftops that you have to drop the Pro's res down to its bare minimum to achieve good framerate then they should go for it, I can't see it happening though.

As we all should, however your posts are implying there is forced parity or BioWare is deliberately holding back one version of the game over the other ...

Purely not buying the game because in general its performance sucks, that I completely understand, but you specifically pointed out 'parity' and shallow ports.

Why are you assuming this, is what I would like to know. Just seems a bit shallow to be this salty over perceived forced parity, when there most likely is none.

I'm not salty bro, I'm addressing all sorts of things in general here: bad ports, forced parity, etc. I just don't support these things.

They had a nice paper on analytical AA a while ago.

Looking back at Mass Effect Andromeda screenshots I took, I see similar checkerboard-like artifacts but to a much, much lesser degree than in Anthem. So yes, it could be something else going on here.

Also curious to learn what "Ultra" AA is doing under the hood. Maybe TAA+SMAA/FXAA?

Could be something completely different though, there are a lot of choices.

Was last gen hardware like this gen?

Okay that's fair then.

Forgive me, I thought it was a "team" thing.

So both shared the same vendor and were like Pro and X?

I think that's irrelevant

Okay that's fair then.

Forgive me, I thought it was a "team" thing.

It's not about a team bro. I don't participate in teams or system wars. None of those companies gives a crap about me.

I own PS4, Pro, One, S, X, and an incredibly expensive PC.

The workaround on PS4 to boost framerate in the cases of KH3 and RDR2 is hardly presented as an option though. If Sony want to shout from the rooftops that you have to drop the Pro's res down to its bare minimum to achieve good framerate then they should go for it, I can't see it happening though.

This is true, the PS4 Pro solution is hardly elegant. But at least it is something a user can do. The XBX is always downscaling from a higher resolution, and while it works great in most cases, we at least have a case or two where there are users asking for a native 1080p mode. Considering how user-friendly MS has been, it shouldn't be a big ask for them to have some kind of option for users who may want to disable forced supersampling.

How is that? You have the Pro and X which are almost the same only one is more capable than the other. Then you have 360 and PS3 which are west and east. How is it that they are the same?

It's a worthy trade off when I'm on PC and I prioritize performance on Overwatch to go from 90 to 200fps to make sure I have the most stable performance at 1440p. But to say you lower 4 times the resolution because you gain 10fps and a much smoother experience is a complete joke and is barely noticeable. It is far from a worthy trade off, especially on a PVE game like RDR2 or even KH3.
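The "4 times the resolution" figure checks out if you compare raw pixel counts. A small Python sketch, assuming native 3840x2160 for "4K" (games using checkerboarding or dynamic resolution will render fewer pixels than this); the table and helper names are just for illustration:

```python
# Standard 16:9 output resolutions as (width, height).
RESOLUTIONS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def pixel_count(name: str) -> int:
    """Total pixels per frame at the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def pixel_ratio(high: str, low: str) -> float:
    """How many times more pixels 'high' has than 'low'."""
    return pixel_count(high) / pixel_count(low)


if __name__ == "__main__":
    for low in ("1440p", "1080p", "720p"):
        print(f"4K has {pixel_ratio('4K', low):.2f}x the pixels of {low}")
```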

Considering that RDR2 has 11 frames input lag I'd say it has other problems than losing a frame once in a blue moon.

Personally I played it in 4k mode on the pro and didn't really notice frame drops anywhere but then again I'm not _that_ sensitive to frame rate either.

I tried 1080p mode but I experienced exactly no difference except for worse graphics. To me it actually seemed to lose detail not just from lower resolution but objects / textures themselves as if the game ran on a normal ps4.

So I tend to agree with you :)

I also played RDR2 in the Pro's 4K mode and I thought it looked and played just fine, but with KH3 whenever I get it, I'm leaning very heavily toward forcing 1080p and get the near-locked 60 FPS cause the 30 FPS mode has severe frame pacing issues and the unlocked mode is hovering around 40 which would seem very inconsistent.

Hell I'm thinking of just bringing up the Pro from the TV room and connect it to my PC monitor I use my gaming laptop on. The monitor's 27'' and on a smaller screen the loss of IQ will probably be a lot less apparent while the improvement in performance will be more so.

I haven't tried KH3 yet but I will once I eventually finish KH1 + 2. Some day..

But for that I am also leaning towards 1080p mode from what I have seen.

I might be frame rate insensitive, but not that insensitive!

The developers hinted that they might try to optimize for 60fps post-launch but considering the current performance and what seems to be an absolute refusal to respect the game's render targets, I very much doubt that will ever happen.

I couldn't tolerate the poor console performance in the demo. Not for a shooter like this. 20-30 is too low, felt horrible to play. I tried it on PC and got 40-60 depending on the area, which I could tolerate much easier.

I think I'm probably skipping out on launch to wait and see, but if I ever do end up getting this it will most likely be for PC. Even if they get X locked 30fps, I think this kind of game needs higher. Some games play okay at 30, others don't feel so good.

That's not the issue here. If that's the case then last-gen parity makes less sense, huh?

How is that? You have the Pro and X which are almost the same only one is more capable than the other. Then you have 360 and PS3 which are west and east. How is it that they are the same?

Most PCs struggle with this game as it is lol
