Yeah, it's likely Gonzalo is PS5.

Honestly, I have no idea what else it could be. For example, I heard suggestions of a Chinese console, but the Subor wasn't nearly as high-end as this thing is, and it mostly fits with all the rumors we've heard so far. The only problem is the Linux driver, but it could just be an accident that they added a reference in there.


Next-gen PS5 and next Xbox speculation launch thread |OT5| - It's in RDNA

- Thread starter Mecha Meister


- Status

- Not open for further replies.

Yeah, but that's only a synthetic benchmark, in which the GTX 1080 and Vega 64 score a little better; in real games their performance is worse.

It gets me really excited because it suggests performance above my previous expectations (I expected it in line with or below the RTX 2070, while these tests suggest slightly above).

gonna come in 2nd in the console war confirmed.

Seems like ray tracing will feature big league on PS5.

Interesting. Strange that we don't have anything on the Scarlett APU. It's even stranger that we presumably have the PS5 chip in there too.

No, they only backtracked from Navi 12 LITE and 21 LITE being console APUs because he wasn't sure.

wait wait wait.

" According to Viglione, the AMD Zen 2 available to the console could allow a wide diffusion in the next-gen titles of the use of Ray Tracing technology. "

why.... is he saying that in relation to the CPU?

He probably knows shit, but it's a good way to promote some indie studio ;d

Not to mention, why would Apisak explicitly mention PS4 (and not something else) in the same tweet and compare it directly to the Gonzalo score... I'm sure he knows more than he is willing to say publicly, which is of course understandable.

Considering that the GPU in Gonzalo seems to perform on par with, or even better than, the RX 5700 XT figures AMD has reported, I wonder if Sony did some heavy modifications to the Navi 10 chip to maximize performance.

We have to factor in that the person at WCCFtech who accurately leaked Navi reported that Navi was made for Sony, because the GPU seems to be punching above its weight.

It's on par with Vega 64, so it's below 5700 XT level. Edit: a little higher according to Guru3D, but for sure not above what AMD told us about the 5700 XT.

I don't believe that story of Navi for Sony, but for speculation's sake: does the 10 in "Navi 10" stand for the time the chip was developed? If so, then it means that the oldest Navi APU is Gonzalo, right? And if Gonzalo is PS5, then that can explain this rumor.

Smaller isn't always better (except with Xbox One X, where it actually is). The PS4/PS4 Pro really have cooling issues that their Xbox counterparts don't have, probably because Microsoft made sure not to have a new RROD on their hands and went a bit overkill with the OG Xbox One.

I'm not sure what that means? They released the most powerful console at the start of the generation in a much smaller form factor than the competition. The PS4 is a great system, I don't quite get what Sony have to make up for?

This is one of the things that always felt weird; it's like there was some really big investment behind the Navi arch compared to any before at AMD... Way above its weight for sure, especially if you look at those engineering sample clocks.

Would be quite interesting if Sony is the one that releases 2 SKUs

$399 10tf version

$599 14.2tf version

I think the Internet would explode lol

Colbert, if this happens, I say it's a truce

LOL. I don't believe that will happen. There aren't even rumors that Sony is working on high and low end versions of PS5.

It's easy. People are chill because when they are logged out reading this thread, everything is white and in a lighter mood. But when they log in, everything seems darker and people are automatically in a bad mood.

$X99 is the sweet spot though. Consumers see a price like $X29 and $X49 and are more aware of the first digit. The psychology of $X99 just works too well for initial pricing.

We could have $449 at launch for the hardcore, and then it will be easier to reach $399 with just one price drop, a year/year and a half after launch

I would appreciate it if you would tag me with an @ so I get a notice. That I saw your comment here was more luck than anything else :p

If the TF of a SKU is >= your bet (12.9), you win! Regardless if there is a less performant SKU.

He is way too often put into this thread for my taste. I agree.

The APU is not in a list of public drivers; the GPU, which is contained in the APU, is. Very important distinction.

Gonzalo (Navi 10 lite) is not PS5. That APU is in a public list of Linux drivers. This is a dead end.

What do you make of the 20k Gonzalo score?

Didn't the 1.8 GHz chip have a different GPU ID that doesn't match Navi 10 LITE, or am I remembering wrong?

It switched GPU ID because 13E9 was for the engineering sample and 13F8 is the qualification sample, but Komachi lists both as Ariel (Navi 10 LITE).

For what reason exactly don't you believe Sony has collaborated with AMD on Navi? Lisa Su has mentioned it many times. If I'm not wrong, she called Cerny a genius after that collaboration.

I'm sorry if I missed it, but has he ever provided any kind of evidence or logical explanation for linking Gonzalo to Ariel?

Why are we focusing on what the PS4 is? We have the Gonzalo score, so why don't we just compare that to other off-the-shelf PC configs?

Ariel was originally listed as 13E9 (which was previously leaked to be Navi 10 LITE), then Gonzalo was found with a 13E9 GPU.

I did compare to other configs. With a CPU that will likely be roughly equivalent to the PS5's, Gonzalo performs better than the RTX 2070 and at the very least equal to Vega 64.

As implied, Vega 64 performs better than the 2070 in this test, which means it's not a perfectly accurate reflection of in-game performance.

But it's still very promising. Navi is supposed to be much better for gaming, so maybe with the Sony-specific modifications the GPU ends up performing better than the RTX 2070, which is a very good result.

TheThreadsThatBindUs

I am not on the list of the enlightened members you put up (and rightly so), but a FLOP calculation is based on the HW resources that are available to you, and is not a number for how effectively you use those HW resources. That is why many people, including me, always say it is theoretical.
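To illustrate why the FLOP figure is called theoretical, here is a minimal Python sketch of the usual peak formula for GCN-style GPUs (compute units x lanes x 2 x clock). The CU counts and clocks below are commonly cited public specs, not numbers from this thread, so treat them as assumptions.

```python
def peak_tflops(compute_units: int, clock_ghz: float,
                lanes_per_cu: int = 64, flops_per_lane_per_clock: int = 2) -> float:
    """Theoretical FP32 peak in TFLOPS.

    Counts hardware resources only: every lane is assumed to retire one fused
    multiply-add (2 FLOPs) every clock, which real workloads rarely achieve.
    """
    return compute_units * lanes_per_cu * flops_per_lane_per_clock * clock_ghz / 1000.0


# Assumed public specs (not taken from the thread):
ps4 = peak_tflops(18, 0.800)     # base PS4: 18 CUs at 800 MHz
vega64 = peak_tflops(64, 1.546)  # Vega 64: 64 CUs at 1546 MHz boost

print(f"PS4: {ps4:.2f} TF, Vega 64: {vega64:.2f} TF, ratio: {vega64 / ps4:.1f}x")
```

Nothing in the formula says how well a game keeps those lanes busy, which is the point being made above.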

I was referencing those most likely to have an in-depth knowledge of the GCN microarch., e.g. the devs among us and those very clued up on the technical details of GPUs and rendering.

I appreciate the response, but Locuza's explanation was more what I was looking for.

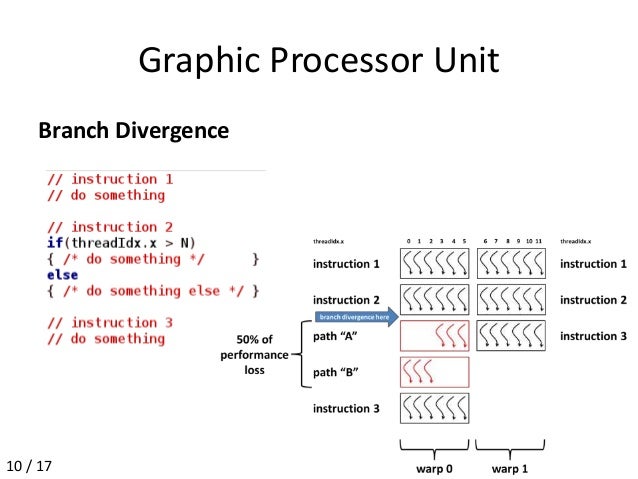

In general the real benefit with RDNA is the flexibility.

Depending on the code Wave32 or Wave64 is better.

AMD has used Wave64 since at least the R600 from 2007, while Nvidia uses Wave32.

Intel is much more flexible, they have (had) Wave4x2 (not anymore iirc), Wave8, Wave16 and Wave32.

From the energy perspective it's more efficient to have one program pointer for 64 items and issuing 64 items vs. 2x32.

You pay less front-end overhead for larger Wavefronts than for smaller ones.

There is also code where you might have less computing overhead with larger Wavefronts than with smaller ones.

On the other side large Wavefronts take up more registers and have a longer resource lifetime if the architecture isn't computing all elements at once.

Also branching costs are (much) higher with larger Wavefronts:

In the worst case only one thread of 64 might do something, so the unit utilization is at 1/64 while with Wave32 it is at least at 1/32.
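A toy Python model of that worst case, for anyone who wants to play with the numbers. It assumes (my simplification, not vendor data) that a wave with no active thread skips the branch entirely, while any other wave pays for all of its lanes.

```python
def branch_utilization(active: list[bool], wave_size: int) -> float:
    """Share of lanes doing useful work while a divergent branch executes.

    Simplified model (an assumption): waves with no active thread skip the
    branch, every other wave occupies all of its lanes for the branch.
    """
    waves = [active[i:i + wave_size] for i in range(0, len(active), wave_size)]
    busy = [w for w in waves if any(w)]
    if not busy:
        return 0.0
    return sum(sum(w) for w in busy) / (wave_size * len(busy))


# Worst case from the post: only one of 64 threads takes the branch.
mask = [False] * 64
mask[5] = True

wave64 = branch_utilization(mask, 64)  # one Wave64 covers all 64 threads
wave32 = branch_utilization(mask, 32)  # two Wave32, one of them is skipped
print(f"Wave64: {wave64:.4f}, Wave32: {wave32:.4f}")
```

With a single active thread the model gives 1/64 for Wave64 and 1/32 for Wave32, matching the figures in the post.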

With RDNA, AMD can choose whichever wave mode is more efficient for the code at hand.

In comparison to GCN RDNA brings some pros and cons.

For Wave64 the pro stuff is that AMD can switch to a new Wavefront more quickly; also, the register file per SIMD for one Wavefront is now 128KB large in contrast to 64KB.

A negative aspect is the latency: for dependent instructions RDNA needs 5 clock cycles, so you would need at least three Wave64 to mask the waiting time.

GCN can be occupied with just one Wave64.
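The "three Wave64" figure can be reproduced with a small back-of-the-envelope sketch in Python. The SIMD widths and the GCN latency are assumptions taken from AMD's public architecture descriptions, not from this post: an RDNA SIMD is 32 lanes wide, so a Wave64 occupies it for two issue cycles, while a GCN SIMD is 16 lanes wide with a 4-cycle dependent latency.

```python
import math


def waves_needed(dependent_latency_cycles: int, wave_size: int, simd_lanes: int) -> int:
    """Waves required to keep a SIMD busy while one wave waits on a dependent result.

    Each wave occupies the SIMD for ceil(wave_size / simd_lanes) cycles per
    instruction, so enough waves must be interleaved to cover the latency.
    """
    issue_cycles = math.ceil(wave_size / simd_lanes)
    return math.ceil(dependent_latency_cycles / issue_cycles)


# 5-cycle latency is from the post; the lane counts are assumptions.
rdna_wave64 = waves_needed(5, wave_size=64, simd_lanes=32)
# GCN: 4-cycle latency and 16-lane SIMD are assumptions from public docs.
gcn_wave64 = waves_needed(4, wave_size=64, simd_lanes=16)

print(f"RDNA Wave64: {rdna_wave64} waves, GCN Wave64: {gcn_wave64} wave")
```

Under those assumptions the model gives three waves for RDNA in Wave64 mode and one for GCN, in line with the post.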

But all of that is very low level stuff and depends on a lot of factors.

Especially for a layman it's hard to say how much of a benefit or disadvantage X vs. Y is in practice.

Thanks Locuza. I'm very grateful for the detailed explanation. It's very enlightening.

What issues? I read about some sticks peeling after long use but the next batches seemed to fix that issue.

I don't recall ever having an issue with my DS4's to be honest. I use them everyday for PS4 and PC.

If you only visit Era, you'd be given the mistaken impression that PS4 controller build quality is exceptionally poor. In reality, however, outside of the stick rubber issue which was fixed with the second gen, they aren't bad at all.

In fact Xbox 1 controllers are notorious for connectivity issues. You just don't see it posted here very often.

"I can't think of what else it could be, therefore it must be X"

If our legal system worked by logic like that the number of incarcerated innocents would balloon to US minority levels.

Way to go with cryptic tweets.

What benchmark has the regular PS4 at 5000?

And the Gonzalo APU seems weak in comparison, with only 4x the points.

It's not like this is my only reason; there is a bunch of evidence to support this.

Nice way to jump to conclusions.

It's not scaling linearly.

https://www.guru3d.com/articles_pages/asus_turbo_geforce_rtx_2070_8gb_review,20.html Not weak at all.

So if it's not a linear scale and it's not the 4x that 5000 vs 20000 would suggest, how much better is it really?

Vega 64 level.

As I said in my previous posts, assuming a Ryzen 7 2700 is equivalent in performance to the Gonzalo CPU, it performs above the RTX 2070 and on the same level as or better than Vega 64 in this test (this 3DMark test shows better performance on a Vega 64 than on an RTX 2070, so it's not perfectly indicative of in-game performance).

What conclusion?

Microsoft claims 4x power over Xbox One X.

4x power over the regular PS4, and not the PS4 Pro, is weak.

If you thought that the PS5 would be much stronger than a Vega 64 paired with a strong 8-core CPU, then you had unrealistic expectations.

The 4x claim by MS is for the CPU.

The 3DMark result doesn't scale linearly, and it is a better result than the RTX 2070's. It's not like you read any of my words; you only use that "4x increase" when that is not how it works...

Yeah.. thread was good again.. and then it came....

It performs close to the RTX 2080, which costs around $700; pretty good to me.

If I remember correctly, that would be more like 4x CPU and 2x GPU compared to the X, correct? And thus like 6x the PS4 GPU, speaking roughly?

Well, that's an interesting perspective you got there. lol

Even for the hardcore, $449 is still a weird price. That and the idea of actively planning a price drop like that seems like a move of desperation. It'd make sense to know when you could drop the price in terms of manufacturing costs, but Sony has shown this gen that they're willing to hold out as long as possible.

So if it's not a linear scale and it's not the 4x that 5000 vs 20000 would suggest, how much better is it really?

Jeremy Bearimy

How dare you miss the i

That was like 70% of the joke

FOR SHAME

I mean, it seems very clear that this person is wrong, but I don't actually know why that is? I guess Microsoft is probably using their own metrics that don't translate directly to this scale, correct?

What awful news to wake up to. So many ups and downs, mostly downs. This thing is like a bad Game of Thrones episode.

Wouldn't that just be equal to 7.2 TF in GCN flops? In that case it's impossible that Gonzalo is PS5.

Unless I am confusing something here.

Actually, 7 GCN TFLOPs is like the Red Wedding.

It's not like this is my only reason; there is a bunch of evidence to support this.

Care to produce some?

As far as I can tell, Komachi interpreted the Gonzalo APU to be linked to the Ariel codename in the PCIE database. Then he tweeted something along the lines of "Ariel (PS5?)".

The Ariel <====> PS5 link was never expanded on or elaborated further by Komachi, and no other evidence to support that conclusion was provided by Komachi or anyone else.

People have just been working under the assumption that Ariel=PS5 because "shit, I can't think of what else it could be".

Then you have those presuming Ariel must be PS5 because Arden and Argalus are Anaconda and Lockhart... so, you know... it fits! (All rational logic be damned)

But then later, when hmqgg confirmed Arden and Argalus have nothing to do with Scarlett, that kinda shot down the whole Ariel=PS5 premise along with it.

So unless you're privy to more insider info you haven't shared, I don't see any evidence to support a Gonzalo-PS5 link.... only Era posters doing what they do best and laying more and more assumption, speculation and conjecture on top of itself and then treating it as fact.

3DMark Fire Strike is a specific benchmark test. It's not an indicator of overall GPU performance, but of relative performance on a very specific workload.

Using those results to work out potential Gonzalo TF numbers is more facepalm worthy than the daft Anaconda=Vega speculation (not saying you were doing this, but I did see it from others).

Vega 64 has 7x more flops than the PS4 GPU, but I am not sure whether there is any IPC increase between these GCN generations, which would make the gap even higher.

If the PS5's GPU does end up performing on par with or better than the RTX 2070, like this test suggests, then we are talking about around 8x faster in real-world performance.

Time to cheer you up: I was wrong with that post, and after further comparisons the result seems pretty great.

1) The CPU base clock matches the PS4; the PS4 Pro also has a 1.6 GHz "base clock" even though it's a console.

2) Ariel was listed near the PS4's APU.

3) Gonzalo was found to have switched to QS mode a week before the Wired article, which is suspicious timing.

4) If point 1 is wrong and the number is not representative of the base clock, then it is a revision number and fits perfectly with the PS4's revision numbering scheme.

5) Navi 10 LITE was the first PCI ID found for a Navi GPU, and the WCCFtech journalist who accurately leaked Navi details said that "Navi was made for Sony", so it makes sense that Navi 10 LITE was the Sony chip.

There might be more I am forgetting, and I know none of these are conclusive, but there are at least enough points hinting at it for us to talk about the possibility.

It's not perfectly linear, sure.

But it's also not that far off. The RX 580 has 57% of the RTX 2080's Fire Strike score, and in games the RX 580 is at about 45% of the RTX 2080.

If we factor in the extra raw computational power of the RX 580 and how those cards always perform better in synthetic benchmarks, the scores make sense.
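For reference, the ratios being argued about can be written out in a few lines of Python. All inputs are the rough figures quoted in this thread (about 5000 for the PS4, about 20000 for Gonzalo, 57% and 45% for the RX 580 against the RTX 2080), so this is only a sketch of the arithmetic and not a performance estimate.

```python
# Rough figures quoted in the thread (not measured here).
ps4_score = 5000
gonzalo_score = 20000
rx580_vs_2080_firestrike = 0.57  # share of the RTX 2080's Fire Strike score
rx580_vs_2080_games = 0.45       # approximate share of its in-game performance

# Raw score ratio that the "only 4x" complaint is based on.
score_ratio = gonzalo_score / ps4_score

# How much the synthetic score flatters the RX 580 relative to games.
synthetic_overstatement = rx580_vs_2080_firestrike / rx580_vs_2080_games

print(f"Gonzalo vs PS4 score ratio: {score_ratio:.1f}x")
print(f"RX 580 synthetic vs in-game overstatement: {synthetic_overstatement:.2f}x")
```

The second number is the gap between synthetic and in-game standing for one card pair only; it says nothing by itself about how Gonzalo would scale.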
