Microsoft Xbox Series X's AMD Architecture Deep Dive at Hot Chips 2020

AMD's next-gen console APUs come out of hiding.

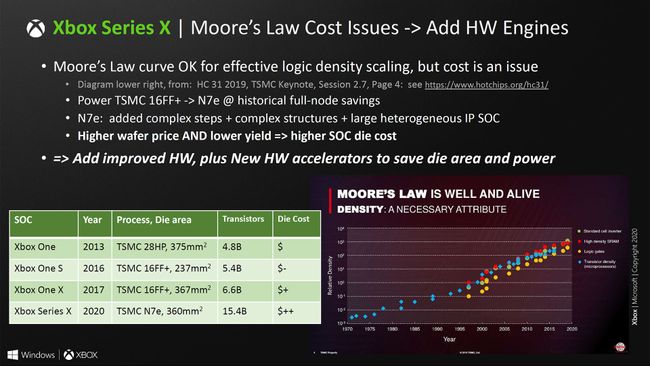

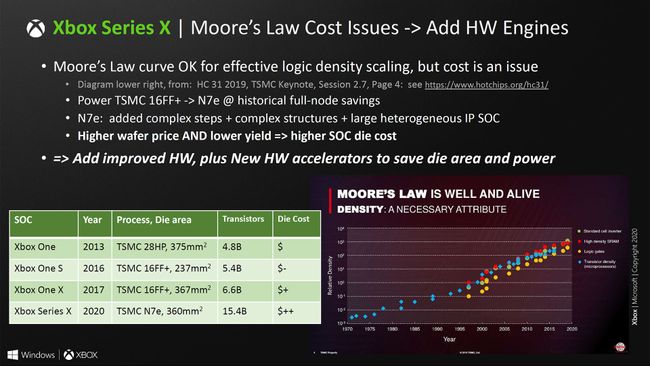

EDIT: 7nm is expensive. The APU is more expensive than the Xbox One X's.

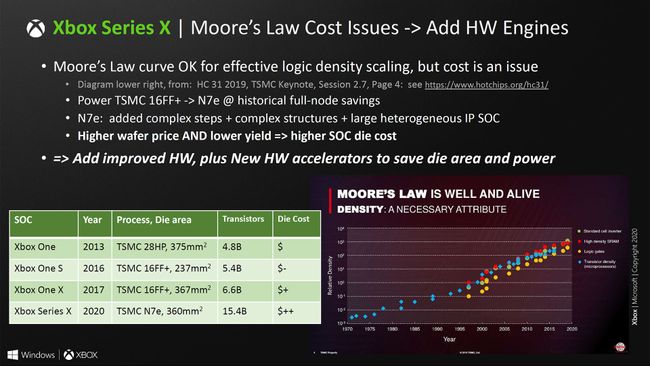

The presentation also takes some time to discuss the increased difficulties in chip scaling relative to Moore's Law. While the chip size of the Xbox Series X is in line with previous console hardware (375mm square for the Xbox One in 2013, 367mm square for the Xbox One X in 2017), and transistor counts have more than doubled relative to the Xbox One X (6.6 billion to 15.4 billion), the die cost is higher. Microsoft doesn't specify how much higher, but lists "$" as the cost on the Xbox One and Xbox One S, "$+" for the Xbox One X, and "$++" for the Xbox Series X. As we've noted elsewhere, while TSMC's 7nm lithography is proving potent, the cost per wafer is substantially higher than at 12nm.
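For anyone who wants the figures in that quote worked out, here is a rough back-of-the-envelope sketch in Python. The transistor counts and the Xbox One X die size come from the quote. The Series X die area is not given there, so the 360 mm² below is only an assumed placeholder for "in line with previous console hardware", and the function name is made up for illustration.

```python
# Figures taken from the quoted passage.
ONE_X_TRANSISTORS = 6.6e9
SERIES_X_TRANSISTORS = 15.4e9
ONE_X_DIE_MM2 = 367.0

# ASSUMPTION: the quote only says the Series X die is "in line with"
# earlier consoles; 360 mm^2 is a placeholder, not a quoted figure.
SERIES_X_DIE_MM2_ASSUMED = 360.0


def density_mtr_per_mm2(transistors: float, die_mm2: float) -> float:
    """Return transistor density in millions of transistors per mm^2."""
    return transistors / die_mm2 / 1e6


transistor_ratio = SERIES_X_TRANSISTORS / ONE_X_TRANSISTORS
one_x_density = density_mtr_per_mm2(ONE_X_TRANSISTORS, ONE_X_DIE_MM2)
series_x_density = density_mtr_per_mm2(
    SERIES_X_TRANSISTORS, SERIES_X_DIE_MM2_ASSUMED
)

print(f"Transistor count ratio: {transistor_ratio:.2f}x")
print(f"Xbox One X density:     {one_x_density:.1f} MTr/mm^2")
print(f"Series X density:       {series_x_density:.1f} MTr/mm^2 (assumed die area)")
```

The point is just that more than twice the transistors fit into roughly the same area, yet the slide still marks the die as more expensive, so the density gain did not translate into a cheaper chip.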

This quote from Tom's Hardware is something I think we need to keep in mind going forward. The SoCs are costing more even though they're on smaller processes. We're no longer at a point where a smaller process means cost savings.

It makes me question whether mid-gen consoles can revolve purely around horsepower this gen, without something else on top (like how tensor cores enable DLSS on Nvidia GPUs, giving results beyond what "increased perf" alone could provide).

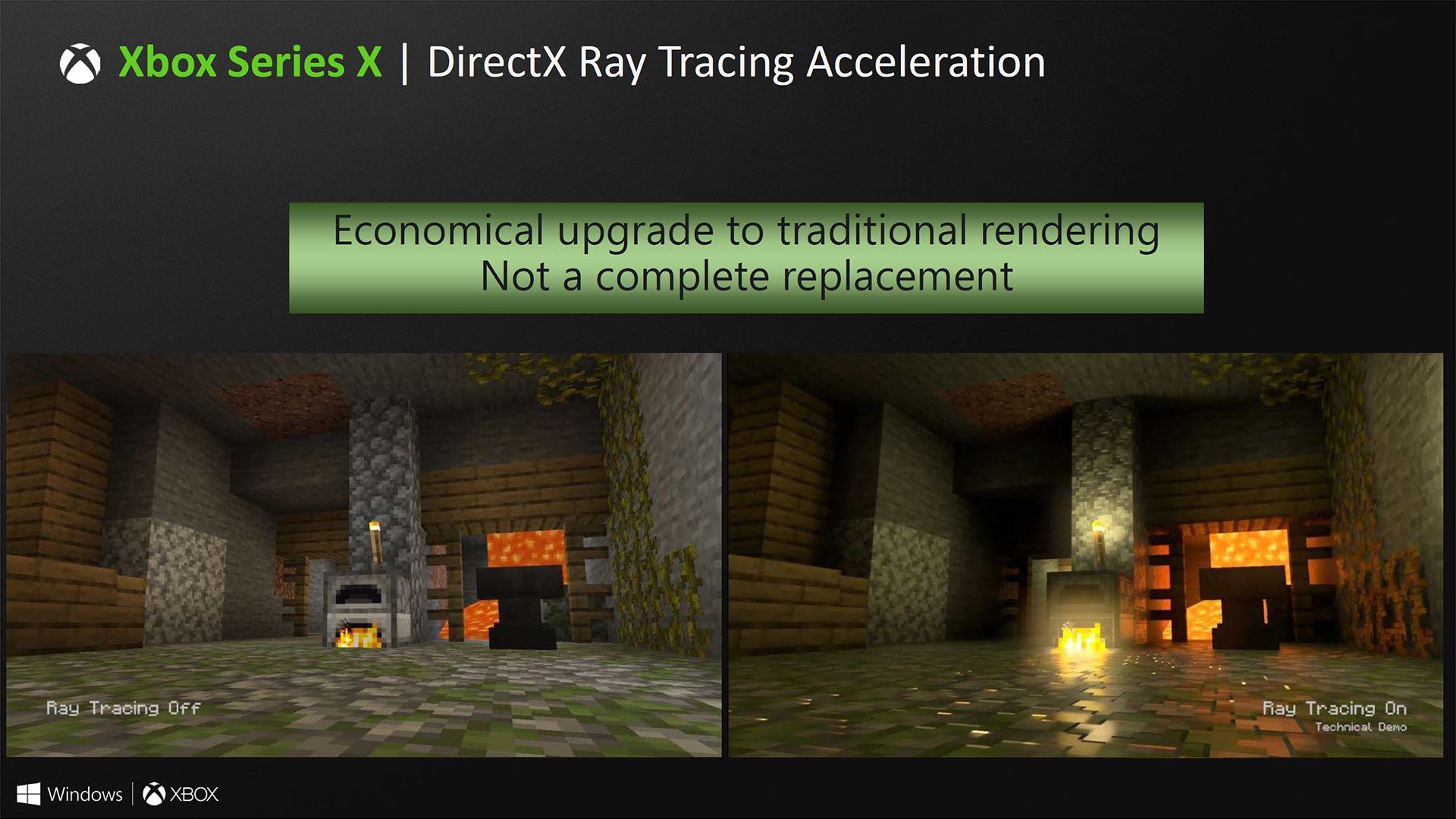

Once again, RT as a feature has never been a main talking point since the console reveals. RT this coming gen on consoles is going to be underwhelming imo due to performance hits.

There are RT slides?!

?? Ratchet, Spider-Man, Pragmata and GT7 have ray-traced reflections. GT7 has reflections at native 4K 60 fps.

I think RT implementation will be far more nuanced on 9th-gen consoles than what the first wave of RT titles on RTX 20 offered in terms of options (low to ultra). But that's the strength of console development, being able to tailor specifically for the platform.

The actual presentation is at 6:30 PM PST for anyone wondering.

Oh, I was wondering why there was nothing much in the link when I skimmed it. Looking forward to it.

RT is still in its infancy in this industry and it's unwise to expect too much. Still, that doesn't mean it'll suck. Besides, I'd argue it's AMD's job to explain their RT implementation, not Microsoft's.

It will be very focused on stuff like reflections imo. Full RT is not happening. It's probably gonna be the selling point of Pro versions.

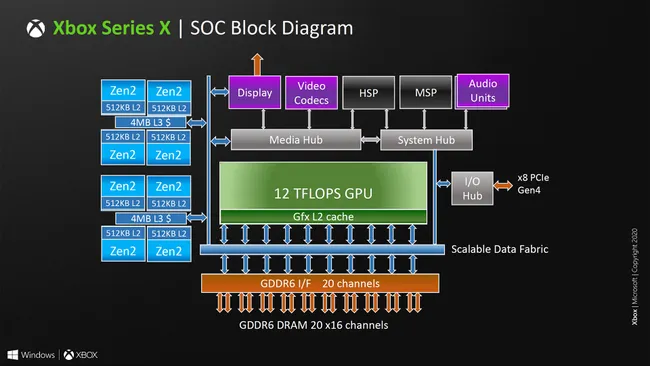

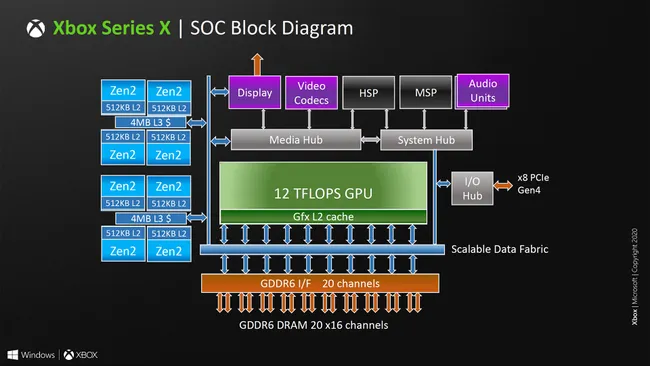

8MB L3 cache... that's a lot less than a Ryzen 3700X. I wonder what the performance cost will be.

www.hardwaretimes.com

APU, RAM, storage and probably cooling are more expensive than on the Xbox One X.

$599

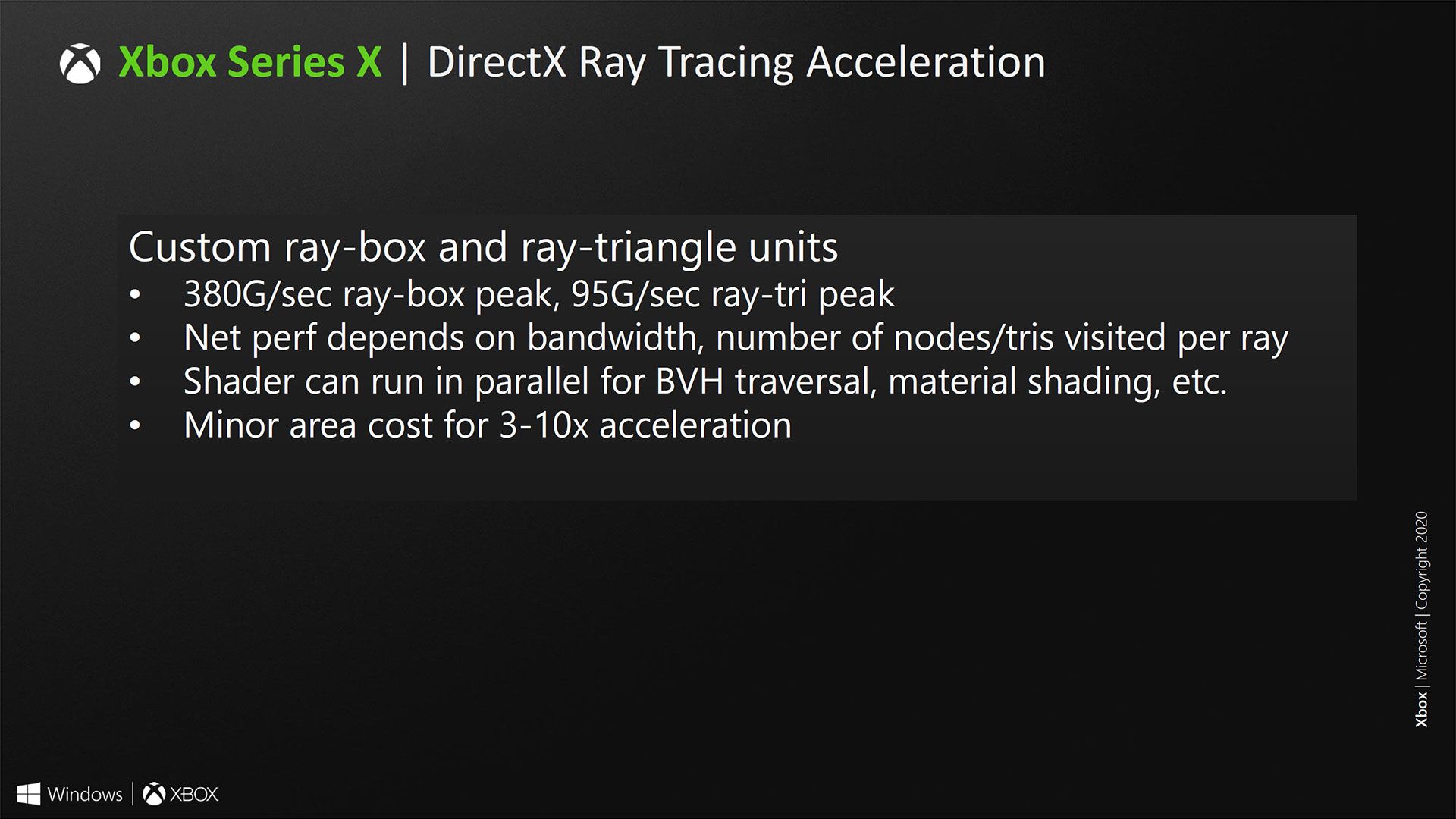

A 3 to 10% performance increase is nothing compared to what we've been led to believe; people have been comparing it to DLSS 2.0.

It says 3-10x, not %. The lack of dedicated hardware means that they'll have to give up some performance to use upscaling.

I am not knowledgeable about this stuff at all, but the slide you quote says 3-10 times, not percent, correct?

But it's 3 to 10 times and not 3-10%, or am I reading the slide wrong?

I'm aware. The lack of dedicated hardware means that they'll have to give up some performance to use upscaling. It's been expected.

It's times, not percentage.
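To make the gap between "3-10%" and "3-10x" concrete, here is a small Python sketch that converts between the two ways of stating a speedup. The helper names and the 10 ms baseline are made up for illustration; nothing here comes from the slide beyond the 3 and 10 figures.

```python
def percent_to_factor(percent_increase: float) -> float:
    """A 'P% faster' claim expressed as a throughput multiplier."""
    return 1.0 + percent_increase / 100.0


def factor_to_percent(factor: float) -> float:
    """An 'N times faster' claim expressed as a percent increase."""
    return (factor - 1.0) * 100.0


def time_after_speedup(base_ms: float, factor: float) -> float:
    """Time for the same work once throughput is multiplied by 'factor'."""
    return base_ms / factor


BASE_MS = 10.0  # hypothetical cost of some workload before the speedup

for label, factor in [
    ("3%", percent_to_factor(3)),
    ("10%", percent_to_factor(10)),
    ("3x", 3.0),
    ("10x", 10.0),
]:
    print(
        f"{label:>4}: {factor:.2f}x throughput, "
        f"{factor_to_percent(factor):.0f}% increase, "
        f"{time_after_speedup(BASE_MS, factor):.2f} ms instead of {BASE_MS:.0f} ms"
    )
```

Put another way, 3x is a 200% increase and 10x is a 900% increase, so misreading the slide as percent understates the claimed gain by roughly two orders of magnitude.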

As something of a tech luddite: would this be something similar to NVIDIA's solution for RTX GPUs? It makes sense that both Sony and MS (as well as AMD) would have something in the works like this.

Similar, yes. The difference is that Nvidia is using dedicated hardware, so, theoretically, Nvidia's method would always outperform the Xbox's solution.

3-10x.

As an insight into RDNA2 and RT behaviour, slide 13 sort of makes it sound like texture and RT ops are shared? Using one eats into the other? I guess it doesn't matter so much where you're memory bound anyway.

Looks like that patent from a ways back has been put into practice.
