Sony and Microsoft aren't talking teraflops yet for their next-gen consoles - and we try to explain why. What if we were to tell you that a teraflop of Navi compute produces much more performance than a teraflop from a PS4 or Xbox One? Just how much does Navi bring to the table in terms of extra performance at the architectural level? Rich attempts to find out.

Navi RDNA vs GCN 1.0: Last-Gen vs Next-Gen GPU Tech Head-To-Head! | Digital Foundry

- Thread starter Equanimity

This is a really curious analysis for me, as I'm a former owner of a 280X and a current owner of a RX 570 (I only got the 570 because the previous card died and it also had some really ridiculous bundled games promo).

There's something that Richard probably does not know about the 280X. At some point, newer driver updates made performance worse for this card. Not that it matters much for this video I guess but I still wanted to point it out. :P

The accompanying article:

so is waiting for a full rdna amd gpu the way to go right now?

edit: specifically, i wanna max cyberpunk at 4k 60fps. will that likely be possible on any gpu?

Every time they've demoed that game it has been running at 30fps and 1080p. So take your conclusions from that.

Gamescom demo was running higher than 1080p "looked to be 4k" according to Digital Foundry.

It was apparently running on an RTX 2080 Ti.

PS4 and XBO are GCN 1.1 though. It should be a R9 290 vs 5700 XT test.

That would be a good follow-up article/video. Those two cards have the same number of ROPs as well. The lower testing bandwidth may make that less relevant though. But it could be worth mentioning, and I got curious so...

According to DF and TechPowerUp, the PS4 Pro has 64 ROPs, the base PS4 and Xbox One X have 32, and the Xbox One S has 16.

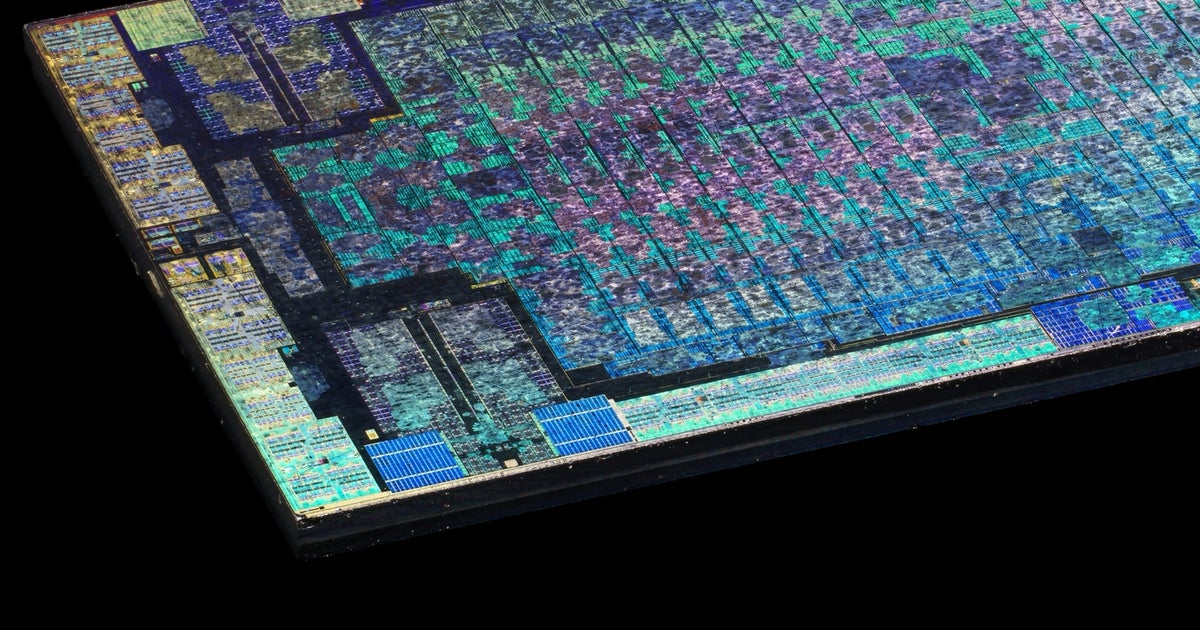

PS4 Pro and Xbox One X processors compared at the silicon level

We knew the spec many months before its launch and gained insights on its core design from its system architect, but on…

AMD Xbox One S GPU Specs

AMD Durango 2, 914 MHz, 768 Cores, 48 TMUs, 16 ROPs, 8192 MB DDR3, 1066 MHz, 256 bit
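
As a rough sanity check on the spec line above: peak FP32 throughput is usually quoted as 2 FLOPs (one fused multiply-add) per shader core per clock. A minimal Python sketch of that arithmetic, where the function name `peak_fp32_tflops` is made up for illustration and the inputs are the core count and clock from the TechPowerUp listing:

```python
def peak_fp32_tflops(shader_cores: int, clock_mhz: float) -> float:
    """Theoretical peak FP32 TFLOPS, assuming one FMA (2 FLOPs) per core per clock."""
    flops_per_second = 2 * shader_cores * clock_mhz * 1e6
    return flops_per_second / 1e12


# Figures from the spec line: 768 cores at 914 MHz.
xbox_one_s_tflops = peak_fp32_tflops(768, 914)
print(f"Xbox One S: {xbox_one_s_tflops:.2f} TFLOPS")  # prints "Xbox One S: 1.40 TFLOPS"
```

This is only the paper number. As the video argues, how much real performance you get per teraflop depends on the architecture.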

so is waiting for a full rdna amd gpu the way to go right now?

edit: specifically, i wanna max cyberpunk at 4k 60fps. will that likely be possible on any gpu?

We have no idea what will be possible. We can't possibly know until it comes out. With ray tracing features I can pretty much rule out 4k 60 on any card though.

It will be a huge jump visually. Once we get past the cross-gen, meh titles, visuals will blow away everything we currently have.

GCN had pretty bad efficiency when looking at TFlops, NAVI seems to have fixed that.

Wouldn't get bent out of shape if the flop-count was lower than expected next gen.

Not really. GCN 1.0 is just old. GCN was an even bigger leap over Terascale than RDNA is over GCN.

Sure, but compared to competing cards in the last several years. Even newer versions of the architecture were outperformed by Nvidia cards with much lower flop counts.

To an extent, but Nvidia tends to understate their power usage and flops. Their cards often boost to higher speeds than their AMD counterparts, even when listed at slower speeds.

This isn't necessarily applicable to the consoles

Why wouldn't it be?

RX 570 is amazing, I keep parroting it. I got an 8GB one for 149 (129 if I'd bothered to send in the rebate) and for my needs, it fit the bill amazingly, and absolutely blows away anything else at that price. Hell, even now, I notice it will play Gears 5 at ultra at 1440P (my monitor) at 30 FPS, and I don't mind 30 FPS. It also plays Destiny 2 at high at 60 FPS at 1440P. I just wanted something cheap to chuck in my computer for very basic gaming capability, instead it's actually competent! One downside is it's pretty loud, but I can accept that.

If I'd walked into a big box and bought Nvidia from Best Buy, I literally would have ended up with a 2GB GT1030 or something for the same price, LOL.

Sorry just saw another chance to gush over my 570 LOL.

The video...I mean yeah. About like I'd expect. Even "comparative" 14 TF though is only ~2X Xbox One X. You aren't getting any order of magnitude there. So something PS5/X2 can do at 4k, X1X can do at 1440P, basically, for reference. And as far as RAM, I'm not expecting X2/PS5 to go higher than 16GB there either, too expensive, so again they won't run away from the X.

Ignoring of course the vastly superior CPUs likely present in next gen. Also SSD optimizations will be exciting.
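
A back-of-the-envelope check of the 4K vs 1440p comparison above, as a Python sketch. It assumes GPU cost scales linearly with pixel count (a simplification that ignores CPU, bandwidth and architectural differences) and uses the Xbox One X's 6 TFLOPS figure. The 14 TF number is the hypothetical from the post, not a confirmed spec.

```python
def pixel_count(width: int, height: int) -> int:
    """Total pixels rendered per frame at a given resolution."""
    return width * height


uhd_pixels = pixel_count(3840, 2160)   # "4k"
qhd_pixels = pixel_count(2560, 1440)   # "1440P"

# How many times more pixels 4K has than 1440p.
pixel_ratio = uhd_pixels / qhd_pixels

# Assumed figures: 6 TF for Xbox One X, 14 "comparative" TF for next gen.
compute_ratio = 14 / 6

print(f"4K has {pixel_ratio:.2f}x the pixels of 1440p")
print(f"14 TF is {compute_ratio:.2f}x a 6 TF Xbox One X")
```

Under those assumptions the two ratios land close together (2.25x pixels vs roughly 2.33x compute), which is the sense in which a ~2X jump roughly buys 1440p to 4k and not much more.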

Because the devs are able to code specifically around the bottlenecks that hinder the gpus in the PC space

so is waiting for a full rdna amd gpu the way to go right now?

edit: specifically, i wanna max cyberpunk at 4k 60fps. will that likely be possible on any gpu?

3080ti most likely

Getting spoiled by these native 4k games on 1X right now, I wonder how it will go over actually having to drop res next gen to get Ray Tracing

I know both sides have said they will have dedicated allocations for Ray Tracing but that still isn't going to make the big perf requirement go away.

Still, wash me away next gen hype, I'm ready

Of course it's applicable to consoles. You can indeed code around weaknesses & play to the strengths of a GPU, but this is mostly on the engine level and would transfer to the PC side as well. It's why GCN does much better in current engines relative to Kepler. It's still mostly dependent on the engine that's being used, because engines aren't really flexible in that you can just completely "code around bottlenecks".

The only bottleneck that I can think of that would exist on PC and wouldn't on consoles is bad drivers, but considering that in most games the gap between consoles & AMD equivalent PC GPUs is pretty small or even nonexistent, I'd say there isn't a whole lot left on the table in the PC space.

If you could code around any bottleneck and achieve maximum performance you'd want a console with a GPU stripped of all architectural improvements they added over the years with as many CUs as possible. You'd want GCN over Navi, you'd want Terascale over GCN, etc. After all, in this case a 12-13 Tflops Vega console would wipe the floor with an 8-9 Tflops Navi console. You'd sacrifice so much performance by going with Navi.

It transfers to the PC side only in more recent high-end titles, while being a near global application on consoles. Also you'd be surprised just how limited PC devs are by APIs. If the consoles had a Kepler GPU of a similar compute capability they wouldn't be any more powerful because of magic Nvidia flops.