Who makes the Steam Deck GPU? Any possibility of something like this getting support on that?

It's an AMD GPU, and besides, this software tech is vendor agnostic, so it should theoretically work on any card, even going as far back as the 1080 Ti.

That's pretty exciting!!!

It was my understanding that doing both simultaneously is costly, since resources are spent on each method. I know that both can be done together; there isn't a technical reason they can't. I was just under the impression that it's usually one or the other, or primarily one, with the other used as a fail-safe if both are in an engine.

Dynamic resolution on top of temporal reconstruction is actually pretty common, and an excellent way to do things. For example, both UE4's Temporal Resolution (used in Gears 5) and Unreal 5's TSR (used in The Matrix Awakens demo) have both temporal image reconstruction and dynamic resolution. It's basically how Gears 5 was able to run at a temporally reconstructed 4K, even though its internal res likely scaled constantly between 1440p and 4K. I believe The Matrix Awakens dynamically scaled between 1080p and 1620p, or something close to that, on PS5/Series X.

In my understanding, it's clearly a superior way to do things on console. For example, when a dev targets a fixed resolution, they tend to leave performance on the table in order to achieve a consistent framerate. As much as a dev tries, they very likely aren't going to be able to pack every inch of the game world with the same demanding amount of detail. So with a dynamic temporal solution, less demanding areas could theoretically run at full native 4K, while very demanding scenes (like intense battles and set pieces) might dip to somewhere between quality mode and performance mode resolutions. The brilliance of this is that the temporal reconstruction would keep all scenarios upscaling to 4K, and hopefully mitigate as much of the visible difference as possible.
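To make the dynamic resolution part concrete, here is a rough sketch of how a per-frame resolution controller could work. It is not taken from Unreal or any shipping engine: the `DrsController` class, the 16.7 ms budget, the 0.5 to 1.0 scale range and the assumption that GPU cost grows with pixel count are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class DrsController:
    """Hypothetical dynamic-resolution controller (illustration only)."""
    target_ms: float = 16.7   # assumed frame budget (roughly 60 fps)
    min_scale: float = 0.5    # 1080p when the output is 2160p
    max_scale: float = 1.0    # native output resolution
    scale: float = 1.0        # current per-axis resolution scale

    def update(self, gpu_ms: float) -> float:
        """Pick the next frame's scale from the last frame's GPU time."""
        if gpu_ms <= 0:
            raise ValueError("gpu_ms must be positive")
        # Assumption: GPU cost is proportional to pixel count, i.e. scale**2,
        # so the per-axis scale changes with the square root of the ratio.
        proposed = self.scale * (self.target_ms / gpu_ms) ** 0.5
        self.scale = min(self.max_scale, max(self.min_scale, proposed))
        return self.scale


def render_height(scale: float, output_height: int = 2160) -> int:
    """Internal render height for a given per-axis scale."""
    return int(round(output_height * scale))


ctrl = DrsController()
ctrl.update(33.4)                 # heavy scene: twice over budget
print(render_height(ctrl.scale))  # internal height drops well below 2160
ctrl.update(8.0)                  # light scene: scale climbs back to native
print(render_height(ctrl.scale))
```

Whatever height the controller picks, the temporal upscaler would still output 4K, which is the point being made above.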

> Is FSR an all-games type of thing, or just certain games like DLSS?

Certain games, like DLSS.

Marketing rights, maybe. They didn't say PS5 can't do it.

> Is FSR an all-games type of thing, or just certain games like DLSS?

Certain games, because it requires implementation for the motion vector aspect. The driver-level version of FSR 1.0 on PC is called RSR, and it can truly be applied to any game with no developer work necessary.

> I'm hoping this leads to even better RT implementation with performance and resolution intact!

This is us getting ahead of ourselves and not taking things into context.

This is exactly what I hoped for!

The new consoles already are amazing as is, this will benefit them even more. I really don't see the need for midgen upgrades now (I barely did before).

I'm not the biggest fan of the console but this will also do wonders for the XSS' general resolution and RT implementation, I imagine.

Is FSR an all-games type of thing, or just certain games like DLSS?

> I imagine The Coalition are going to do wonderful things for the Xbox platform for all studios with this.

The Coalition is an Unreal studio and will very likely just use TSR, as they demoed with Alpha Point.

Which DLSS does.

This is us getting ahead of ourselves and not taking things into context.

As is, the new consoles are also adopting various rez gimmicks. Be that DRS or upsampling. This is just one more upsampling method to add to a long list of currently employed methods. This is not doing anything that is not already being done in some shape or form. At the end of the day, this is still just reconstruction.

Here are the facts. Running at native 1440p and outputting at that resolution has a lower render cost than running internally at 1440p and upsampling to 4K using any upsampling method. You add the cost of upsampling on top of whatever native rez render cost is being used. Where this tech, or tech like it, can shine is in allowing you to render internally at, say, 1080p or 1252p and then sample that up to 4K convincingly, but at a render cost that is, say, on par with just running natively at 1440p. So your render cost is equivalent to 1440p, but your IQ is equivalent to, say, 1800p or 2000p.

But none of this means anything until we can see just what it costs. If it costs too much, then devs would opt for cheaper methods. That is what we should be looking at.
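The cost argument above can be put into numbers with a toy model. This is only a sketch: it assumes render cost scales linearly with pixel count (real games don't scale that cleanly), and the 12 ms native 1440p cost and 1.5 ms upscale pass are placeholder figures, not measurements.

```python
def pixels(height: int, aspect: float = 16 / 9) -> int:
    """Pixel count of a frame with the given height and aspect ratio."""
    return round(height * aspect) * height


def render_ms(height: int, ms_at_1440p: float) -> float:
    """Estimated render time, assuming cost is linear in pixel count."""
    return ms_at_1440p * pixels(height) / pixels(1440)


def upscaled_frame_ms(internal_height: int, ms_at_1440p: float,
                      upscale_ms: float) -> float:
    """Render at the internal resolution, then pay a fixed upscale cost."""
    return render_ms(internal_height, ms_at_1440p) + upscale_ms


NATIVE_1440P_MS = 12.0  # placeholder cost of a native 1440p frame
UPSCALE_MS = 1.5        # placeholder cost of the upscaling pass

for h in (1080, 1252, 1440):
    total = upscaled_frame_ms(h, NATIVE_1440P_MS, UPSCALE_MS)
    print(f"{h}p internal + upscale: {total:.2f} ms "
          f"(native 1440p: {NATIVE_1440P_MS:.2f} ms)")
```

Under these assumptions, 1440p plus upscaling always costs more than native 1440p, while a lower internal resolution leaves room for the upscale pass within the same budget. Whether the result actually looks like 1800p or 2000p is the part no cost model can answer.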

> The Coalition is an Unreal studio and will very likely just use TSR, as they demoed with Alpha Point.

Wouldn't supporting an open standard be better?

FSR 1.0 was also marketed only with Xbox in mind.

> Probably for the same reason PS5 doesn't have VRR yet, even after almost 18 months of Xbox having it. Sony just seem to be way behind in the features department compared to MS atm.

Sure.

> This is us getting ahead of ourselves and not taking things into context.

We're just going off of what AMD is claiming right now, which is that it achieves similar results and performance impact to DLSS 2.0+. If that's the case, this is going to be a really big leap forward on consoles.

No, not necessarily. Unreal Engine games can use whatever they want, but it's likely TSR will be superior, and it's baked in, so…

> FSR 1.0 was announced as coming to Xbox first, like it is now, but it showed up in an actual PS5 game first, in case anyone was wondering.

This.

FSR 1.0 was also marketed only with Xbox in mind.

First game with FSR 1.0 was on PS5 ;)

Sure.

That's why the first game with FSR 1.0, which was also marketed with Xbox, not PlayStation, came out first on PS5.

VRR is overdue, but cut those bs…

My god dude, give it up lol. You don't need to reiterate the same thing 3 times in 3 separate posts in the same thread over the span of 2 minutes. We get it.

> We're just going off of what AMD is claiming right now, which is that it achieves similar results & performance impact as DLSS 2.0+. If that's the case, this is going to be a really big leap forward on consoles.

Some engines (like Insomniac's) already have a temporal upscaling solution. FSR is for those who don't have their own proprietary tech.

> My god dude, give it up lol. You don't need to reiterate the same thing 3 times in 3 separate posts in the same thread over the span of 2 minutes. We get it.

Sorry.

Are there temporal reconstruction techniques that DON'T also provide AA (at least as an option)?

I thought it was inherent to the technique...

I would assume adding even more AA on top of a temporal solution would be common sense if devs found their games still suffered from shimmering, but outside of TSR, I don't know of any that do.

Which DLSS does.

This seems to be getting us similar results. So no, I'm not getting ahead of myself - Again, if it's as good as we're all hoping that it is.

I don't know why you felt the need to mention that other devs already use reconstruction techniques... Because... I never suggested they didn't?

This just seems to finally rival what Nvidia is doing with DLSS. Let's hope it does!

> We're just going off of what AMD is claiming right now, which is that it achieves similar results & performance impact as DLSS 2.0+. If that's the case, this is going to be a really big leap forward on consoles.

I am not knocking the tech, or what it may or may not mean. I am just slightly skeptical and simply waiting for more information before we start hyping this up to be the next best thing for gaming. Again, we should see what it actually costs to implement, and what kind of results those costs give us.

> I am not knocking the tech, or what it may or may not mean. I am just slightly skeptical and simply waiting for more information before we start hyping this up to be the next best thing for gaming. Again, we should see what it actually costs to implement, and what kind of results those costs give us.

From The Verge article, this is what AMD claims:

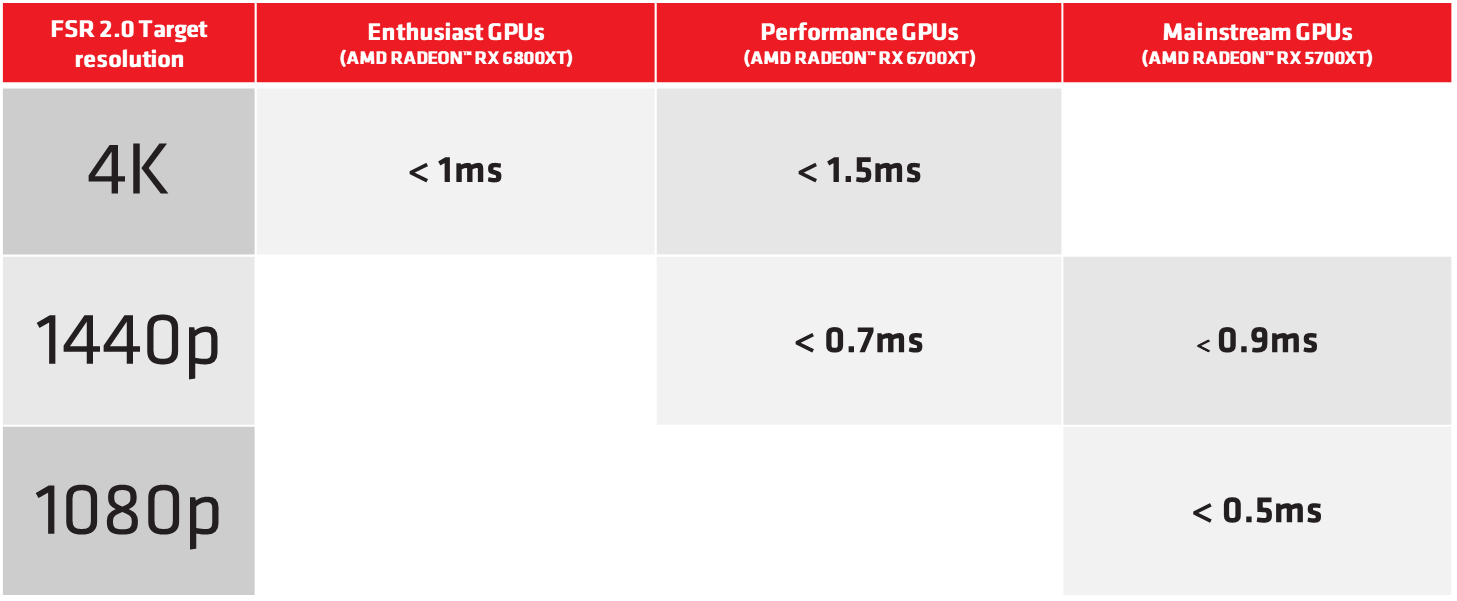

For reference, DLSS on a 2070S (the closest approximation to both the PS5 and XSX) has a render cost of ~2.1ms for a 4K output. And that's with dedicated ML hardware.

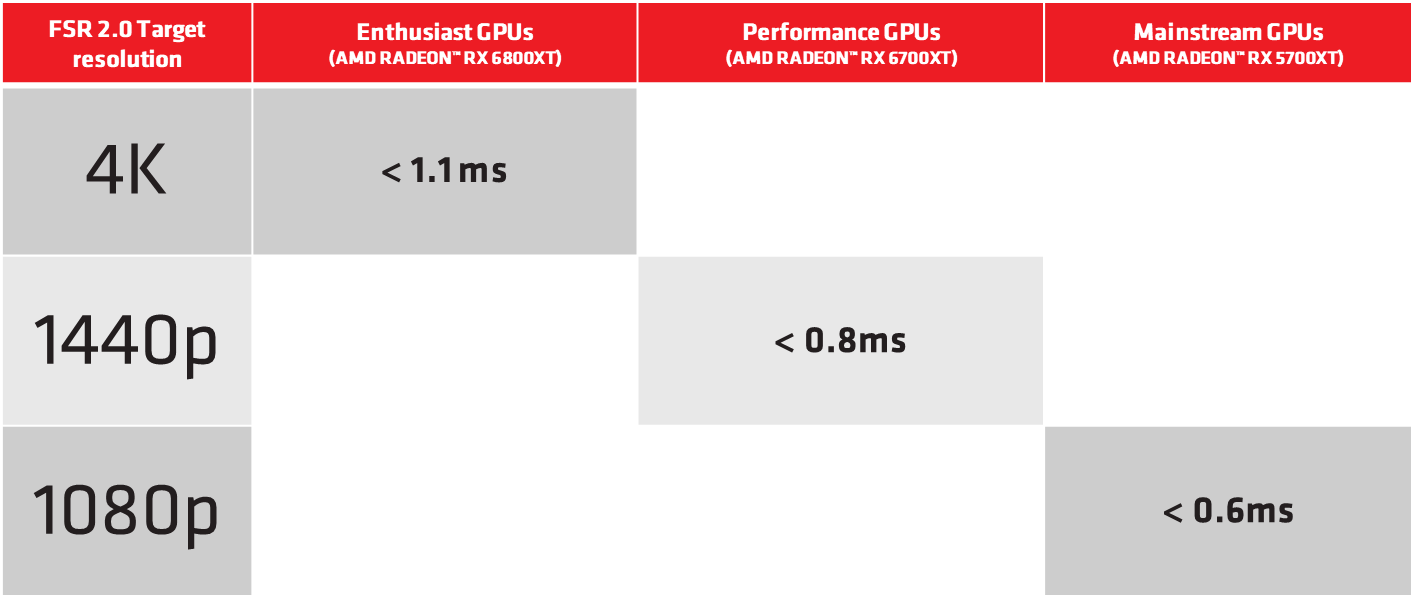

The Verge said: While the FSR 2.0 algorithm is remarkably fast, under 1.5ms in all of AMD's examples, it still takes time to run, and it takes more time on lower-end GPUs where AMD freely admits that some of its optimizations don't work quite as well.

> This is us getting ahead of ourselves and not taking things into context.

Note that even DLSS isn't really able to match native IQ at a 4x (1080p -> 4K) upscale, so hopefully people are keeping their expectations in check.

> Deathloop will likely be the first three-way comparison: pre-FSR 2.0 versus post-FSR 2.0 (IQ or performance uplifts, if any) on Xbox and maybe PS5, plus an additional DLSS comparison on PC. That won't be until September, though.

Going to be interesting to see if Deathloop includes FSR 2.0 when it launches on Xbox later this year.

> Watching the DF Direct today, Alex Battaglia said it's more like checkerboard rendering, so I wouldn't be surprised if it didn't.

In what context? I highly doubt Alex said that FSR 2.0 is more like CBR.

> Going to be interesting to see if Deathloop includes FSR 2.0 when it launches on Xbox later this year.

I don't see why it wouldn't, tbh.

TAA and temporal reconstruction are not very different. In fact, at the back end they're usually the same thing, the difference being whether the input resolution is the same as the output resolution or not. Specific algorithms will differ, but specific TAA algorithms and specific TAAU algorithms also differ from each other quite a lot in the finer details, so please understand I'm speaking on a fairly high level here.

1440p -> 4k temporal reconstruction vs 4k native TAA differs on how many samples they take per frame, but in both cases they accumulate samples across several frames to produce very high resolution images, which are then down-sampled to the final display. They might accumulate samples across 4, 8 or 16 frames, which implies an extremely large sample count, internally creating an image that may be somewhere in the region of 4x or 8x supersampled, which is then down-scaled to the target output 4k display. The anti-aliasing effect of TAA is produced by this intermediate step where you create this very high resolution image. But even if you started at only 1440p equivalent samples per frame, the intermediate image before output to the screen is still much higher res than it would have been rendering at native res without any form of AA - so it's still an "Anti aliased image".

Note here that early work on TAA referred to the technique as "temporally amortized supersampling". I hope, based on the above description, it makes sense why they would have called it that, and how temporal upsampling / reconstruction is, conceptually, just a slight variation on TAA itself.
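A toy version of that accumulation idea, for a single pixel with a hard edge running through it, might look like the following. It is a deliberately simplified sketch: there is no motion or reprojection, the history is a plain running mean rather than the exponential blend real implementations tend to use, and `scene`, `halton` and the 0.3 edge position are invented for the example.

```python
def halton(index: int, base: int = 2) -> float:
    """Low-discrepancy sequence in [0, 1), used here as sub-pixel jitter."""
    f, r = 1.0, 0.0
    while index > 0:
        f /= base
        r += f * (index % base)
        index //= base
    return r


def scene(x: float) -> float:
    """Hypothetical pixel content: white left of x = 0.3, black right of it."""
    return 1.0 if x < 0.3 else 0.0


def accumulate(num_frames: int) -> float:
    """Take one jittered sample per frame and average them over time."""
    history = 0.0
    for frame in range(1, num_frames + 1):
        sample = scene(halton(frame))           # one sample this frame
        history += (sample - history) / frame   # running mean of all samples
    return history


print(accumulate(1))    # a single sample is pure black or white (aliased)
print(accumulate(16))   # many frames approach the true 0.3 edge coverage
```

With one sample the pixel is either fully on or fully off. After a handful of frames the accumulated value sits close to the true coverage, which is what a supersampled render of that pixel would give you in a single frame.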

Now, regarding AA, yes, they might also use other techniques on top of this temporal stuff at some stage of the pipeline. It's not uncommon to use cheap post-process AA like FXAA at some stage of the image, to further mitigate some kinds of aliasing beyond that which the TAA suppresses, and (critically) to provide "good enough" anti-aliasing coverage such that things which disrupt the TAA don't result in a momentarily completely raw image. Examples of things like that would be camera cuts: the algorithm needs to throw out the accumulated data when a camera cut occurs, because the data of the old image has no bearing on the new one, and if they kept it, the image would become smoother but dreadfully smeared / ghosted with the old image. Thus, in rejecting the data to prevent that, you would otherwise wind up with 1 frame with no AA at all, which then gradually builds up as each new frame is generated. The FXAA isn't as good as TAA, but when it only needs to cover 2-3 frames before the TAA can start looking good again, that's usually not perceptually noticeable for users. A specific concrete example of games that do this is Resident Evil 2 / 3 Remake, but there are many more than that. The CoD games have been using a technique they refer to as "Filmic SMAA", which blends the SMAA algorithm with temporal accumulation to achieve their total solution. Sometimes in a menu you'll see a CryEngine game have options like "SMAA T2X" or whatever, which is also similar.
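The camera-cut behaviour can be sketched for a single pixel value as well. Again, this is an illustration under assumptions rather than any engine's actual code: the `resolve` function, the 0.1 blend factor and the idea of passing cut frames as a set are all hypothetical.

```python
def resolve(samples, cuts, blend=0.1):
    """Blend each new sample into the history, resetting on camera cuts.

    samples: per-frame values for one pixel
    cuts:    set of frame indices where a camera cut happens (hypothetical)
    blend:   weight of the new sample in the exponential history blend
    """
    history = None
    out = []
    for i, sample in enumerate(samples):
        if history is None or i in cuts:
            # No usable history: this frame gets no temporal smoothing at
            # all, which is where a cheap fallback like FXAA would step in.
            history = sample
        else:
            history += blend * (sample - history)
        out.append(history)
    return out


# The pixel is white for five frames, then the camera cuts to a black scene.
frames = [1.0] * 5 + [0.0] * 5

with_reset = resolve(frames, cuts={5})
without_reset = resolve(frames, cuts=set())
print(with_reset[5:])     # new scene shows up immediately
print(without_reset[5:])  # old scene lingers and fades out (ghosting)
```

Keeping the history across the cut leaves the old image smeared into the new one for several frames. Throwing it away fixes that at the cost of a frame or two with no accumulated samples.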

> Let me have a look at it again.
>
> Edit: disregard, he said it's basically a version of TAAU. That's what happens when you're lying in bed with COVID and your head is spinning 🤒

You're good haha! Hope you feel better.

AMD GPUOpen said: The FidelityFX Super Resolution 2.0 analytical approach can provide advantages compared to ML solutions, such as more control to cater to a range of different scenarios, and a better ability to optimize.

> This specific quote makes me wonder if AMD is implying that there can be some hand optimization by the developer on a game-by-game basis to help cover any image quality gap that ML might have?

Kind of sounds like it. Maybe they're able to manually tweak the algorithm on a scene-by-scene basis if needed?

This has nothing to do with Sony; it's an open standard that anyone can use. And regardless, Sony doesn't tend to quickly and publicly announce what is or isn't in their console SDK.

> Kind of sounds like it. Maybe able to manually tweak the algorithm on a scene-by-scene basis if needed?

Given how most games just use the default DLSS sharpening options, I'm a bit skeptical that many devs would spend that much time hyper-optimizing their settings on a per-scene basis.

> This has nothing to do with Sony; it's an open standard that anyone can use. And regardless, Sony doesn't tend to quickly and publicly announce what is or isn't in their console SDK.

Exactly.

Situation is same as with FSR 1.0. If devs want it, they can add it.