You need to look at GPU utilization.

It's certainly possible that you could be GPU-bottlenecked if you're playing games on ultra settings at high resolutions, but even then I'd be surprised if you aren't running into CPU limitations quite often.

Since I wrote a post about CPU bottlenecks the other day, I'll post it here verbatim:

If your GPU is not at 95% or higher (ideally 99%) at all times it is generally an indication that your CPU is bottlenecking the GPU.
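As a rough sketch of that rule of thumb, the check below flags a run as probably CPU-limited when GPU utilization samples drop below 95%. The function names and the sample lists are hypothetical; the samples are assumed to be percentages copied out of whatever monitoring tool you log with. A frame rate cap or V-Sync will also pull utilization down, so treat the result as a hint only.

```python
def fraction_below(samples, threshold=95.0):
    """Share of GPU utilization samples (in percent) that fall below the threshold."""
    if not samples:
        raise ValueError("need at least one utilization sample")
    return sum(1 for s in samples if s < threshold) / len(samples)


def looks_cpu_limited(samples, threshold=95.0):
    """True if the GPU was under the threshold at any point in the run."""
    return fraction_below(samples, threshold) > 0.0


# Hypothetical logs: one run with the GPU pegged, one with it waiting on the CPU.
gpu_bound_run = [99, 98, 99, 97, 99]
cpu_bound_run = [60, 58, 62, 61, 59]

print(looks_cpu_limited(gpu_bound_run))  # False
print(looks_cpu_limited(cpu_bound_run))  # True
```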

It's rare for the CPU to ever be at 100% across all cores -or even a single core- even if it is the bottleneck. It does sometimes happen when playing demanding games that can scale to more cores than the CPU has though.

I often use Deus Ex: Mankind Divided to illustrate these issues, as it's one of the few games which would completely max out my old i5-2500K CPU - 100% usage on all four cores.

I don't have full screenshots for all of the examples, only stats, but it still illustrates the point.

This is the clearest example there could be of being CPU-limited, as the scene remained at 43 FPS no matter what graphical settings were used, and upgrading the GPU did nothing but lower GPU utilization.

The faster GPU can use higher quality graphics settings, but cannot go above 43 FPS because that's the limit of what the CPU can handle.

Technically there is a very slight GPU bottleneck introduced with the high quality settings, as it dropped from 43.7 FPS to 43.3 FPS.

In another test I adjusted the i5-2500K's clock speed.

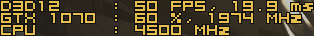

At 4.5 GHz the game runs at 50 FPS with 60% GPU utilization:

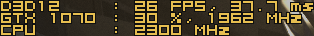

Dropping it to 2.3 GHz (roughly half the clock speed) cuts the frame rate almost in proportion: going from 50 FPS to only 26 FPS, and dropping GPU utilization from 60% to 30%:

So we know that the GPU is being bottlenecked by the CPU here - and we can calculate just how much from this.

If it's running at 50 FPS with 60% utilization, it should run at 83 FPS with 100% utilization; but that would require the CPU to run at ~7.5 GHz.
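The arithmetic behind those figures can be sketched as below. It assumes frame rate scales linearly with both GPU utilization and CPU clock speed, which is what the two test points suggest but is only an approximation. The constant and function names are made up for illustration; the numbers are the ones from the 2500K tests.

```python
# Figures from the two i5-2500K test points.
HIGH_CLOCK_GHZ, HIGH_FPS, HIGH_GPU_UTIL = 4.5, 50.0, 60.0
LOW_CLOCK_GHZ, LOW_FPS, LOW_GPU_UTIL = 2.3, 26.0, 30.0


def scale_to_full_gpu(value, gpu_util_percent):
    """Scale a value linearly from the observed GPU utilization up to 100%."""
    return value * 100.0 / gpu_util_percent


# How closely frame rate and GPU utilization tracked the clock speed change.
clock_ratio = LOW_CLOCK_GHZ / HIGH_CLOCK_GHZ   # about 0.51
fps_ratio = LOW_FPS / HIGH_FPS                 # 0.52
util_ratio = LOW_GPU_UTIL / HIGH_GPU_UTIL      # 0.50

# What the GPU could deliver if the CPU kept it fully busy,
# and the clock speed the same CPU would need to get there.
fps_ceiling = scale_to_full_gpu(HIGH_FPS, HIGH_GPU_UTIL)         # about 83.3
clock_needed = scale_to_full_gpu(HIGH_CLOCK_GHZ, HIGH_GPU_UTIL)  # 7.5

print(f"ratios: clock {clock_ratio:.2f}, fps {fps_ratio:.2f}, gpu util {util_ratio:.2f}")
print(f"projected: {fps_ceiling:.0f} FPS at 100% GPU, needing ~{clock_needed:.1f} GHz")
```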

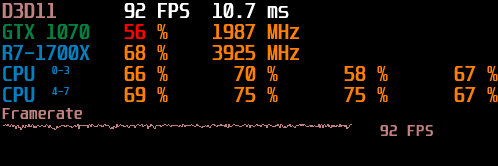

After upgrading the CPU to a much faster 8-core Ryzen 1700X the game runs much better in the same scene.

These results are not directly comparable though, as I had found that DX11 performed better than DX12 once it was not as CPU-limited, and the graphical settings may not have been an exact match.

But the point is that upgrading the CPU now let that scene run at over 90 FPS using the same GTX 1070, while the best that could be achieved with the i5-2500K was only 50 FPS.

However, you can see that since the graphical settings were lowered further, the GPU is still only working at 56% here.

Even though the most-worked core on the CPU is only at 75% utilization, the GPU is still being held back and a faster CPU should still improve performance.

That's why you can't look at CPU utilization to judge whether or not it's a bottleneck. It may not always be at 100% even when it's holding things back.

You need to be looking at GPU utilization to see whether it's being bottlenecked by the CPU or not.

And it's very easy to see when the GPU is the limiting factor for performance - it will be at 99% utilization.

You can often lower graphical settings to reduce the work placed on the GPU and improve performance though. Few settings in games other than draw distance will reduce a CPU bottleneck.

I've always been hesitant to OC because I wanted the CPU to last (and it has, literally 10 years running)...the main reason I bought that 980X Extreme CPU for $1000 was because I wanted the fastest frequencies and I wanted the CPU to last for at least 5 years

My 2500K has been overclocked from 3.3 GHz to 4.5 GHz for the past nine years without issues.

So long as it's adequately cooled and you stay within reasonable core voltage ranges, overclocking should not meaningfully impact CPU lifespan or stability.

so as far as my 980X would the 9700K be a worthwhile upgrade?...I want to buy something above what I may need today so that it'll last me another 8-10 years...I hear the new 10000 chips aren't worth it especially with Intel planning on releasing another round of 14nm CPU's at the end of the year (Rocket Lake-S)...I'd really prefer to wait for Intel's 10nm chips but apparently that's not coming until 2021

You are generally better off spending less money and upgrading more often than spending a lot of money now and expecting it to last a decade.

But these CPUs are also far less expensive than your 980X to begin with.

so no chance Intel's upcoming Rocket Lake-S chips will beat AMD's 3000/4000 series?...Intel's only hope of getting back in the game will be 10nm?

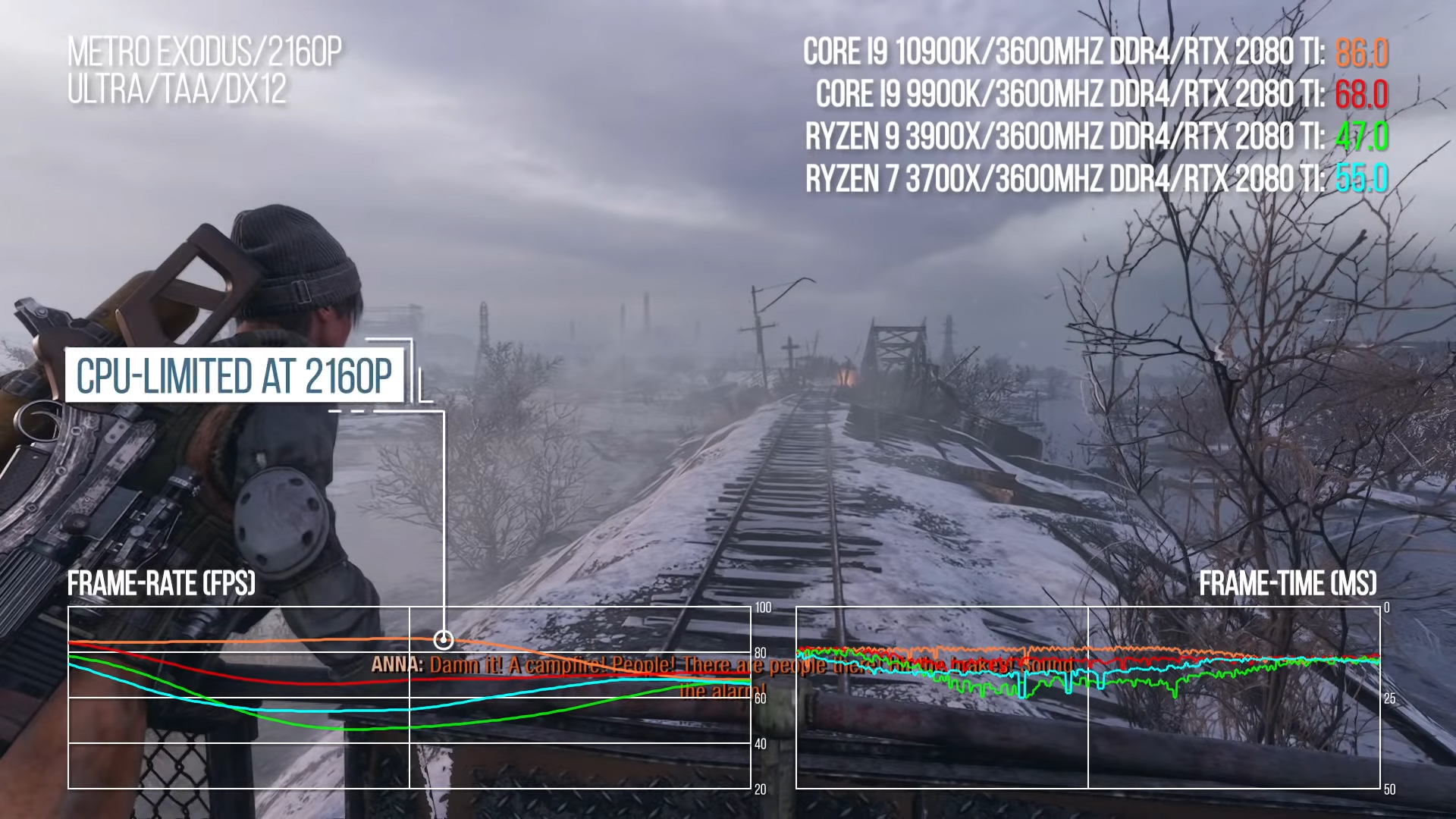

A lot of people like to push the idea that CPU doesn't matter at high resolutions, or that AMD is close to Intel in gaming performance.

Intel provides a far more consistent experience in games than AMD.

The 4000-series CPUs should hopefully improve upon this a lot with the move from 4-core CCXes to 8-core CCXes and improved IPC, but no-one can say how they will perform until they're tested.

And even then, a lot of places testing CPU performance do inadequate testing and only look at averages (where AMD might look fine) or post percentile numbers without context.
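To show why an average on its own can hide problems, here is a minimal sketch using two made-up frame time captures that have the same average frame rate but very different worst-case behaviour. The data is invented for illustration, not taken from any benchmark, and the "1% low" here is just one common definition (the frame rate equivalent of the slowest 1% of frames); reviewers calculate it in different ways.

```python
def average_fps(frame_times_ms):
    """Average frame rate over the whole capture."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def one_percent_low_fps(frame_times_ms):
    """Frame rate equivalent of the slowest 1% of frames (at least one frame)."""
    slowest_first = sorted(frame_times_ms, reverse=True)
    count = max(1, len(slowest_first) // 100)
    slowest = slowest_first[:count]
    return 1000.0 * len(slowest) / sum(slowest)


# Hypothetical captures of 1000 frames each, frame times in milliseconds.
smooth = [10.0] * 1000
stuttery = [9.5] * 990 + [59.5] * 10

for name, capture in (("smooth", smooth), ("stuttery", stuttery)):
    avg = average_fps(capture)
    low = one_percent_low_fps(capture)
    print(f"{name}: average {avg:.1f} FPS, 1% low {low:.1f} FPS")
```

Both captures average 100 FPS, but the second one spends its worst 1% of frames at under 17 FPS.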

The 9700K is a $375 CPU -

https://www.amazon.com/Intel-Core-i7-9700K-Procesador-desbloqueado/dp/B07HHN6KBZ

You might as well get a 3900X for basically $30 more at that point.

https://www.amazon.com/AMD-Ryzen-3900X-24-Thread-Processor/dp/B07SXMZLP9

I have a 9900K myself and have some minor buyer's regret for not holding out for the Zen 2 chips after them blowing up Intel at Computex last year.

The 3900X is much worse for gaming than a 9700K - though I'd always recommend buying the latest generation of CPU (10700K) even if the difference seems minor. Prices may be discounted but the MSRP for the 9700K and 10700K is the same, while the 10700K has hyperthreading (8c8t vs 8c16t).

The 12-core 3900X is really hurt by the 4x3-core CCX design - it tends to perform worse in most games than the 8-core 3700X with its 2x4-core CCX design (see above).

Higher core-count AMD CPUs like the 3900X are good for 'offline' tasks like batch-processing or rendering, but not as good as Intel CPUs for real-time tasks like gaming or editing.

Frametime is simply the reciprocal of framerate (frame time in ms = 1000 / FPS). It's just another way of expressing the same thing.

The main reason it used to be "different" is that monitoring tools used to average frame rate over 100-1000ms, but not frame time.

The option to disable averaging and have RTSS update its stats every frame has been there for a long time now though.
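A small sketch of the difference averaging makes, using an invented one-second capture with a single long frame in it. Frame time in milliseconds is 1000 divided by the frame rate, so the same data can be shown either way; the only question is whether it is averaged first.

```python
# Hypothetical capture: 56 frames at 16 ms plus one 104 ms hitch (1000 ms total).
frame_times_ms = [16.0] * 56 + [104.0]

# Frame rate averaged over the whole one-second window, as older overlays showed it.
averaged_fps = 1000.0 * len(frame_times_ms) / sum(frame_times_ms)

# Frame rate computed per frame, with no averaging.
per_frame_fps = [1000.0 / t for t in frame_times_ms]
worst_frame_fps = min(per_frame_fps)

print(f"averaged over the window: {averaged_fps:.1f} FPS")
print(f"worst single frame: {worst_frame_fps:.1f} FPS ({max(frame_times_ms):.0f} ms)")
```

The averaged counter reads 57 FPS while the hitch itself is equivalent to under 10 FPS, which is why the two readouts used to look like they disagreed.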