I will always disable or mod out chromatic aberration, vignetting, lens flare, and screen effects like a 'dirty lens' when possible.

Film grain generally doesn't bother me, but it depends on the implementation.

In Resident Evil 2, the 'film noise' option is used to dither the output, so disabling it severely reduces image quality.

The output would ideally be dithered regardless of that setting, but I can see why it would be done in a single processing step.

There's a lot of banding/posterization in the shadows with the film noise option disabled (the image was brightened to make the difference more obvious).
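To show why dithering matters here, a rough sketch in Python. This is not how Resident Evil 2 does it; the 32 output levels, the uniform noise, and all the function names are my own assumptions for illustration. It quantizes a dark gradient with and without noise added before rounding, then compares how well local averages match the original.

```python
import random

def quantize(value, levels):
    """Round a value in [0, 1] to the nearest of `levels` evenly spaced steps."""
    steps = levels - 1
    return round(value * steps) / steps

def quantize_dithered(value, levels, rng):
    """Add up to half a step of uniform noise either way, then round."""
    steps = levels - 1
    noise = (rng.random() - 0.5) / steps
    clamped = min(1.0, max(0.0, value + noise))
    return round(clamped * steps) / steps

def render(gradient, levels, dither, seed=0):
    """Quantize a whole row of pixels, with or without dithering."""
    rng = random.Random(seed)
    if dither:
        return [quantize_dithered(v, levels, rng) for v in gradient]
    return [quantize(v, levels) for v in gradient]

def block_error(output, reference, block=50):
    """Mean absolute error between local averages of output and reference."""
    errors = []
    for start in range(0, len(output), block):
        out_avg = sum(output[start:start + block]) / block
        ref_avg = sum(reference[start:start + block]) / block
        errors.append(abs(out_avg - ref_avg))
    return sum(errors) / len(errors)

# A smooth shadow gradient from black up to 10% brightness.
width = 2000
gradient = [0.1 * i / (width - 1) for i in range(width)]

banded = render(gradient, levels=32, dither=False)
dithered = render(gradient, levels=32, dither=True)

print("distinct values without dither:", len(set(banded)))
print("local error without dither:", block_error(banded, gradient))
print("local error with dither:   ", block_error(dithered, gradient))
```

Without dithering the gradient collapses into a handful of flat bands. With dithering, each pixel is still one of the same few levels, but the average over a small area tracks the original gradient far more closely, which is what your eye sees.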

Some games manage to implement film grain in a way that does nothing to improve image quality: it only adds noise on top of an image that already has banding.

I've noticed some games implementing sharpening lately (Anthem, Division 2). Thankfully you can turn it off, but why, why, why is it on in the first place?

Post-process temporal anti-aliasing techniques blur the image, especially at lower resolutions, so post-TAA sharpening is required to produce a sharp output.

Ideally it would be a slider, as seen in Ubisoft games, rather than a toggle. Many other games don't even give you the option and either force it on or don't sharpen the image at all.
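A minimal sketch of what a sharpening slider does, assuming a simple unsharp mask on a single row of pixels. No game's actual filter is shown here; `box_blur` merely stands in for the softening TAA causes, and `strength` is a hypothetical slider value.

```python
def box_blur(signal, radius=1):
    """Average each sample with its neighbours (a stand-in for TAA softening)."""
    n = len(signal)
    out = []
    for i in range(n):
        window = signal[max(0, i - radius):min(n, i + radius + 1)]
        out.append(sum(window) / len(window))
    return out

def sharpen(signal, strength):
    """Unsharp mask: push each sample away from its local average.

    strength = 0.0 leaves the signal unchanged; larger values exaggerate edges.
    """
    blurred = box_blur(signal)
    return [min(1.0, max(0.0, s + strength * (s - b)))
            for s, b in zip(signal, blurred)]

def spread(signal):
    """Difference between the brightest and darkest sample."""
    return max(signal) - min(signal)

# A hard edge, softened the way a temporal filter might soften it.
edge = [0.2] * 4 + [0.8] * 4
soft = box_blur(edge)

for strength in (0.0, 0.5, 1.0):
    print(strength, [round(v, 3) for v in sharpen(soft, strength)])
```

As the strength goes up, the samples either side of the edge overshoot past the original 0.2 and 0.8. That overshoot is the halo you get from over-sharpening, and it's why a slider is nicer than a fixed amount someone else picked for you.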

Is CA as bad as the bloom effect was? God I hated bloom.

Also, what is CA?

I think Fable may have been the worst offender for over-use of bloom lighting. "Migraines in 60 seconds or less, or we'll give you your money back!"

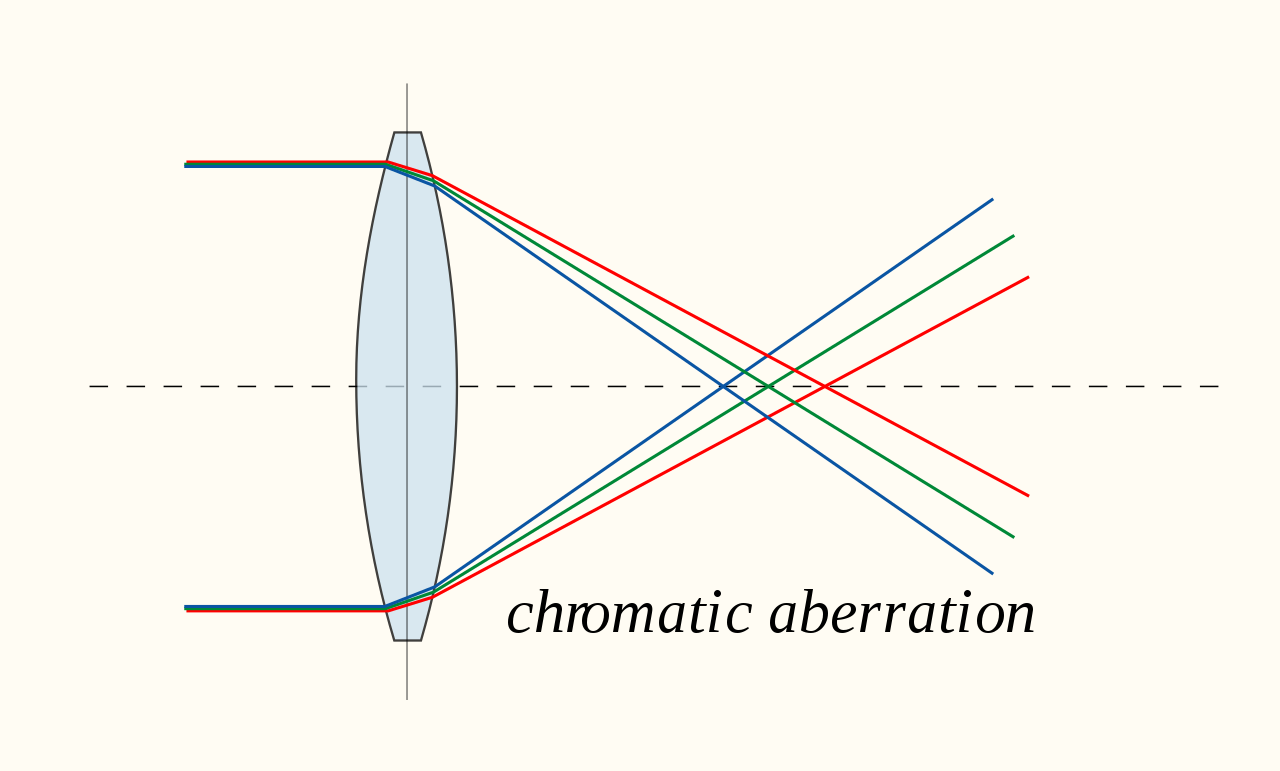

Chromatic aberration simulates low-quality lenses which cannot focus all wavelengths of light to the same point.

Photographers and filmmakers spend a lot of money on lenses to avoid this artifact.
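For anyone wondering how games fake it: one common approach, sketched here as an assumption rather than any particular engine's shader, is to sample the red and blue channels from slightly different positions, with the offset growing toward the edge of the screen. The function name, the `strength` parameter, and the choice of which channel shifts which way are all made up for illustration.

```python
import math

def chromatic_aberration(row, strength):
    """Fake lateral chromatic aberration on one row of (r, g, b) pixels.

    Red is sampled from slightly nearer the centre and blue from slightly
    further out; green is left alone. The offset scales with distance from
    the centre, so the middle of the row is untouched.
    """
    n = len(row)
    centre = (n - 1) / 2

    def sample(channel, pos):
        # Nearest-neighbour lookup, clamped to the row.
        i = min(n - 1, max(0, math.floor(pos + 0.5)))
        return row[i][channel]

    out = []
    for x in range(n):
        offset = strength * (x - centre)
        out.append((sample(0, x - offset), row[x][1], sample(2, x + offset)))
    return out

# A black row with a white bar off to one side.
black, white = (0.0, 0.0, 0.0), (1.0, 1.0, 1.0)
row = [black] * 15 + [white] * 3 + [black] * 3

fringed = chromatic_aberration(row, strength=0.1)
for x, pixel in enumerate(fringed):
    if pixel != row[x]:
        print(x, pixel)  # pixels that picked up a colour fringe
```

The input is pure black and white, but the output has coloured pixels around the bar, because the three channels no longer line up. That's the same misalignment a cheap lens produces optically.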

Chromatic aberration from a simple, low-quality lens design:

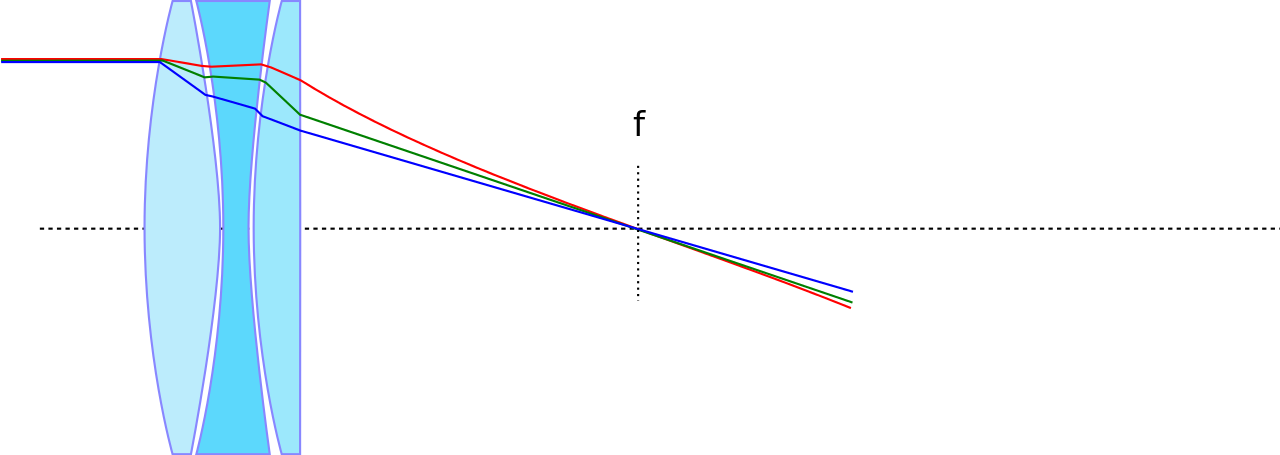

A high-quality, complex apochromatic lens design focuses visible wavelengths to a single point (the film plane or digital sensor):