PC Gaming Era | July 2018 - Where the Duffs are Whales and the Slashes are Grizzlies

- Thread starter MRORANGE

- Status

- Not open for further replies.

I'm getting a PS4 with TLOU and Bloodborne next week. You guys make me wanna cancel my order, why you do this.

Fast, someone tell me that TLOU is the best thing that has ever happened in video gaming.

Bloodborne justifies a PS4 purchase by itself. TLOU is amazing as well.

This. My PS4 is basically a Bloodborne machine. Very worth it.

Get Dad of Boy instead, really solid Action RPG IMO. You could also give Horizon a play, flinging arrows that blow up robot (dinos) can be fun just for that ;D

I have yet to play TLOU, but yes. You should definitely buy and play GoW. First game I played on my PS4 as well :D

In the past month or so I've played through TLAOU, Uncharted 1 and 2, and just started UC3 on PS4 and it's been very good. I've enjoyed those more than the newer open world games like Horizon and GoW (linear with OW-elements).

Edit: lol, top of the page. Yes, this is the PC community thread.

I really need to catch up on my Atelier games on vita.

Still whatever that KT doesn't like putting the series on sale for usually no more than 40% off.

Alright, it is settled then. Getting the GoW Bundle with TLOU and Bloodborne. Thanks fam

Kinda crazy that GoW will be my first 2018 released game I play. My GOTY list is going to be rather short.

Is this Superhot Roguelike edition?

I need to Platinum Bloodborne sometime. Game of the generation. Looking forward to FromSofts next Playstation project, but it will probably be on PS5.

Btw, if you have a PS3 you should get the GoW collection. They are amazing games. Not long either.

Alright, it is settled then. Getting the GoW Bundle with TLOU and Bloodborne. Thanks fam

Kinda crazy that GoW will be my first 2018 released game I play. My GOTY list is going to be rather short.

I need to Platinum Bloodborne sometime. Game of the generation. Looking forward to FromSofts next Playstation project, but it will probably be on PS5.

Btw, if you have a PS3 you should get the GoW collection. They are amazing games. Not long either.

Good choice. Bloodborne very quickly rose to the top of my favourite lists since it shares a lot of elements that I like in Dark Souls 1 that the sequels didn't continue. The Last of Us isn't quite the life changing event that people have talked it up to be, especially not when played for the first time in 2018, but it is a very good game.

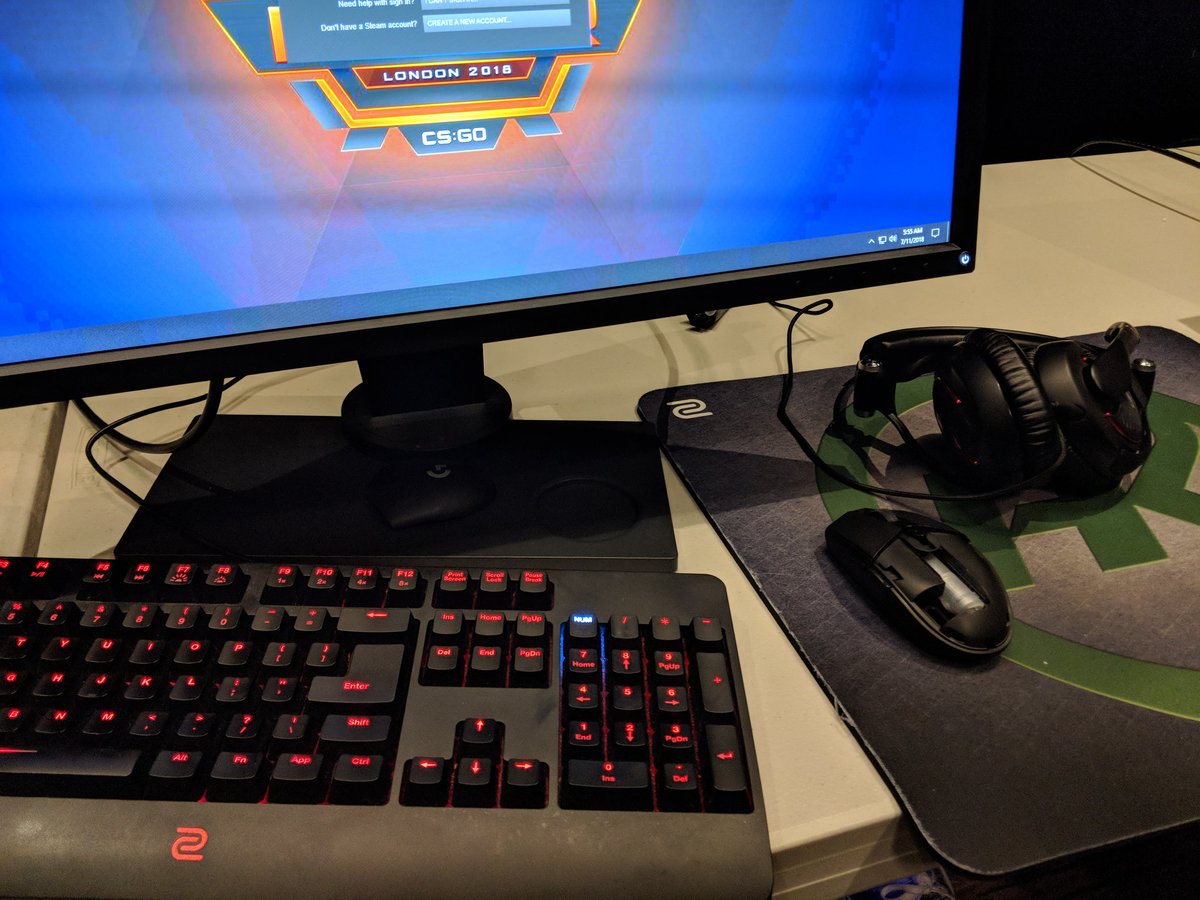

Anyone has experience with the Zowie mices? I hear great things and wanted to get some feedback from here before I get serious and buy myself one.

Thanks!

They're vastly inferior to the last two gens of Logitech mice. The new Logitech wireless ones use some black magic that makes them have lower input lag than wired mice. Literal black magic. I have a 2 gen old wired one that's still not been surpassed by zowie. Akronis had some issues with the current general build quality I think.

I'm getting a PS4 with TLOU and Bloodborne next week. You guys make me wanna cancel my order, why you do this.

Fast, someone tell me that TLOU is the best thing that has ever happened in video gaming.

It's the best Sony game on PS3, don't @ me

I'm getting a PS4 with TLOU and Bloodborne next week. You guys make me wanna cancel my order, why you do this.

Fast, someone tell me that TLOU is the best thing that has ever happened in video gaming.

TLoU is the greatest. dont listen to heathens and blasphemers!

They're vastly inferior to the last two gens of Logitech mice. The new Logitech wireless ones use some black magic that makes them have lower input lag than wired mice. Literal black magic. I have a 2 gen old wired one that's still not been surpassed by zowie. Akronis had some issues with the current general build quality I think.

Can you hook me up with a link with these mices that you speak of, good sir?

Haven't played The Last of Us, but I really enjoyed the Uncharted games (1-3), but I can't say some of them "aged" well, since they did have some technical limitations that you could notice even back when they first came out. They aren't the bestest games ever but they definitely aren't "games that play itself" people on this site and gaf liked to say, that's a bunch of bullshit. The problem about "fanboy" culture is that people exaggerate things to the max.

Environments look alright, I just have issues with some of the character models like Orchid, they aren't very detailed close up, while some other ones do look fine. I think the only thing demanding is the particle effects

I'm getting a PS4 with TLOU and Bloodborne next week. You guys make me wanna cancel my order, why you do this.

Fast, someone tell me that TLOU is the best thing that has ever happened in video gaming.

Oh Bloodborne is my least favourite FROM Souls-like too

Sorry xD

FYI if you own Superhot now you'll get Mind Control Delete for free when it leaves early access. So only buy it now if playing it a few months early is worth $10. And yeah, it looks like a roguelike expansion with classes and power-ups. Vanilla Superhot is a bit brief if you don't dive into the challenge stuff so the bigger better replayable expansion is looking pretty sweet.

Environments look alright, I just have issues with some of the character models like Orchid, they aren't very detailed close up, while some other ones do look fine. I think the only thing demanding is the particle effects

Huh, why Orchid? She's one of the characters from the first season and I think her close-ups is one of the best in game, her moveset and animations are very good:

Some late characters from Season 3 are much worse, for example, Eagle and Shin Hisako have absolutely emotionless faces when they getting their ass beaten up during finishers, General RAAM has very weak and stiff moveset, and Fulgore is just trash.

FYI if you own Superhot now you'll get Mind Control Delete for free when it leaves early access. So only buy it now if playing it a few months early is worth $10. And yeah, it looks like a roguelike expansion with classes and power-ups. Vanilla Superhot is a bit brief if you don't dive into the challenge stuff so the bigger better replayable expansion is looking pretty sweet.

Oh, didn't know that, cool to get it for free.

https://imgur.com/a/G4CnYI6

https://www.reddit.com/r/MouseReview/comments/8uiewo/not_sure_if_the_pictures_been_posted_here_but/

Lots of streamers accidentally showing it on cam over the last couple weeks

Can you hook me up with a link with these mices that you speak of, good sir?

They would be the g703/603 and the g305. The g305 is particularly interesting because you can replace the battery with a AAA lithium battery inside of a plastic AAA>AA shell converter to get the weight down to 85-88 grams. Further if you don't mind the comfort, you can remove the plastic shell to get it around ~77 grams. Weight is one of the huge disadvantages of wireless gaming mice, and since most have internal batteries you can't really mod them in this way. The g305 is pretty popular lately because of this.

Worth mentioning they aren't actually faster than a wired connection, just that they are only 1ms slower and it isn't noticeable. Having no cable is a great boon for aim.

That leaked mouse supports wireless as well. The NDA seems to expire on the 20th.

Here is a useful link: https://www.overclock.net/forum/375-mice/854100-gaming-mouse-sensor-list.html

You probably want to stick to MERCURY, HERO, 3389, 3360, 3366 and other variations like the one in the Rival 600, i.e. anything based on the 3360 sensor (which all of the above except Mercury and HERO are). Mercury and HERO are Logitech's in-house low-power sensors, used in budget mice and their wireless mice. They aren't as good as the 3360 and its variants, but they are very close.

Huh, why Orchid? She's one of the characters from the first season and I think her close-ups is one of the best in game, her moveset and animations are very good:

Some late characters from Season 3 are much worse, for example, Eagle and Shin Hisako have absolutely emotionless faces when they getting their ass beaten up during finishers, General RAAM has very weak and stiff moveset, and Fulgore is just trash.

I think it's just her design that makes the low poly models stick out more than the others for me, and probably because she's the first character I used lol

and man the game looks nicer at higher res, but I can't 4k it with a 1060 gtx despite the game is barely using my gpu at 1080p, the particle effects tank my framerate at 4k

Can you hook me up with a link with these mices that you speak of, good sir?

Look for ones with the HERO sensor. G603, G305

Not the Atelier game I wanted confirmed in the West.... :\

But is the framerate locked to 60 or not, that's the question. I don't even have 144hz monitor, but I'm curious anyway.

Parsnip

Bacon said "If you know an MTF game, you know the answer" when GhostTrick asked if the game has uncapped framerate, so I'm expecting above 60fps.

Don't think I ever had a game where I went faster from "this is kinda fun" to "Wow, this is frustrating and dumb" than Killer is Dead. The encounter design manages to become incredibly bloated and repetitive, at the fourth level already. Doesn't help that you basically can't regenerate health and are better off getting yourself killed and restarting a checkpoint with full health.

Don't think I ever had a game where I went faster from "this is kinda fun" to "Wow, this is frustrating and dumb" than Killer is Dead. The encounter design manages to become incredibly bloated and repetitive, at the fourth level already. Doesn't help that you basically can't regenerate health and are better off getting yourself killed and restarting a checkpoint with full health.

You gotta master the dodge counter

The higher the atk counter is will give u the chance of enemies dropping upgrade points for health, blood or exp

So you use that to get some health back

At your stage i didnt have problems taking down enemies tbh

You gotta master the dodge counter

The higher the atk counter is will give u the chance of enemies dropping upgrade points for health, blood or exp

So you use that to get some health back

At your stage i didnt have problems taking down enemies tbh

Those upgrade points don't regenerate health, though. I guess I could focus on dodging better, but the game just didn't hook me enough for me to put in that kind of time. This really isn't on the level of an Ys game, where you feel rewarded for beating the bosses. In fact, the two bosses I beat so far were the most boring shit ever. "Hard", sure, but as far as game design is concerned, it's really boring stuff. The dumb anime shenanigans (which I enjoyed!) aren't redeeming enough to continue this game, honestly.

Those upgrade points don't regenerate health, though. I guess I could focus on dodging better, but the game just didn't hook me enough for me to put in that kind of time. This really isn't on the level of an Ys game, where you feel rewarded for beating the bosses. In fact, the two bosses I beat so far were the most boring shit ever. "Hard", sure, but as far as game design is concerned, it's really boring stuff. The dumb anime shenanigans (which I enjoyed!) aren't redeeming enough to continue this game, honestly.

Are you playing on Normal or Hard? Normal is doable and generally the game is pretty generous with the timing for the dodge action before you get attacked.

Oh and you have set the FPS and performance to 60 FPS or higher right? There's a guide in the steam community forums.

Hello, I am a bot! I come bearing 1 gifts from KainXVIII!

This is a raffle that will expire in 3 hours. The winner will be drawn at random! Any prizes leftover after the deadline will become available on a first-come first-serve basis.

How to Participate:

To enter this giveaway click here to send a private message to me, GiftBot, with the subject line entry.

In the message body, copy and paste the entire line below for the prize you wish to win. You are allowed only 1 entry per giveaway.

Available Prizes:

Spiritual Warfare & Wisdom Tree Collection -- GB-3af35f7c-f7ca-4275-850f-bb7180d0fcba - Won by Ganado

Are you playing on Normal or Hard? Normal is doable and generally the game is pretty generous with the timing for the dodge action before you get attacked.

Oh and you have set the FPS and performance to 60 FPS or higher right? There's a guide in the steam community forums.

yah I remember killer is dead being a relaxing dodge and hit game, I didn't have health issues playing on normal

I need to make a super good friendo who I can trust to remote connect to my desktop and ALT+F4 me anytime I boot up R6 Siege, because at this point, I'm never going to beat my backlog ever. -_-

Are you playing on Normal or Hard? Normal is doable and generally the game is pretty generous with the timing for the dodge action before you get attacked.

Oh and you have set the FPS and performance to 60 FPS or higher right? There's a guide in the steam community forums.

yah I remember killer is dead being a relaxing dodge and hit game, I didn't have health issues playing on normal

Didn't notice it was 30 FPS, it did feel somehow "off" to play though, so maybe that was it. I'll look at the guide, and focus on dodging. Let's give this thing another try!

Didn't notice it was 30 FPS, it did feel somehow "off" to play though, so maybe that was it. I'll look at the guide, and focus on dodging. Let's give this thing another try!

Keep in mind that upping the FPS to 60 or even 120 will affect certain timings for QTEs, but you can also edit that as well to resolve it.

https://steamcommunity.com/app/2611...dding or Configuration&requiredtags[]=English

One more thing: You can also disable the HUD if you want by editing .ini files as well.
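To illustrate why raising the frame rate can throw off QTE timings, here is a minimal sketch. It assumes the input window is counted in frames rather than seconds, which is a common pattern but not something this thread confirms for Killer is Dead. The function names and the 30-frame window are made up for the example.

```python
# Hypothetical model: a QTE input window that lasts a fixed number of frames.
# Whether Killer is Dead actually works this way is an assumption.

def window_seconds(window_frames, fps):
    """Real-time length of a window that lasts a fixed number of frames."""
    return window_frames / fps


def rescaled_frames(window_frames, base_fps, target_fps):
    """Frame count that keeps the same real-time window at a new frame rate."""
    return round(window_frames * target_fps / base_fps)


if __name__ == "__main__":
    # The same 30-frame window gets shorter in real time as FPS goes up.
    for fps in (30, 60, 120):
        print(fps, "fps:", window_seconds(30, fps), "seconds")
    # Scaling the frame count restores the original one-second window.
    print("frames needed at 60 fps:", rescaled_frames(30, 30, 60))
```

Under that assumption, editing the timing value alongside the FPS cap is what brings the window back to its original length.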

every sale we look at this game thinking it would be fun in co op but then always remember the co op person has to pick some gimped guy

i dont know much about the game really, but are they ever going to "fix" that? is there something in the game that prevents two people from picking two different characters? maybe co op just isnt an important feature to them

I had fun with KID, but the dreamy, blurry stuff was constantly tickling my motion sickness buttons.

Better than your favorite, pal.

Parsnip

Bacon said "If you know an MTF game, you know the answer" when GhostTrick asked if the game has uncapped framerate, so I'm expecting above 60fps.

I mean I'm hoping for it too, but since he didn't specifically confirm it, and the image from capcom blog showed 60, I'm not sure I'm as optimistic as you.

I mean I'm hoping for it too, but since he didn't specifically confirm it, and the image from capcom blog showed 60, I'm not sure I'm as optimistic as you.

I'll be on the optimistic side :V

My eyes are probably just terrible, but I've been struggling to hit good framerates at 4k with games (this sucks as 4k is the entire reason I switched to PC gaming) so I just did comparisons between 1440p and 4k. Took screenshots and directly compared and... on some games I don't see any difference. Of course, if I zoom the screenshots in I can. But normal fullsize on screen, I can't. However some games there is a noticeable difference. Like I compared Tomb Raider 2013, and on Ultimate quality the 1440p and 4k look identical to me, however the 1440p runs at 60fps while 4k runs at 30fps. Yet on some other games, even with antialiasing, I notice some nasty jaggies at 1440p versus 4k. But I have also encountered games which have nasty aliasing even at 4k with the same antialiasing solution.

Is this a game engine thing?
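On the performance half of that question, a rough sketch of the pixel-count arithmetic helps. It uses the standard 16:9 dimensions for each resolution and assumes GPU cost scales roughly with pixels drawn, which is a simplification. It says nothing about why aliasing differs between games, since that depends on each game's antialiasing method and art.

```python
# Pixel counts for common 16:9 render resolutions.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
}


def pixel_count(name):
    """Total pixels rendered per frame at the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def pixel_ratio(high, low):
    """How many times more pixels `high` draws than `low`."""
    return pixel_count(high) / pixel_count(low)


if __name__ == "__main__":
    print("4K vs 1440p:", pixel_ratio("4K", "1440p"))
    print("4K vs 1080p:", pixel_ratio("4K", "1080p"))
```

4K draws 2.25 times the pixels of 1440p, which is in the same ballpark as the 60fps versus 30fps gap reported for Tomb Raider above.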

I loved that movie! Used to download hacker manuals pretending to know shit when in fact I couldn't even get around html without a cheat sheet.

The free updates for Gungeon always seem great, I wish I could "figure out" that game. I 100%'d Isaac but I can't make any headway into Gungeon for some reason. I just always feel so weak, like none of the weapons do any real damage.

I'm getting a PS4 with TLOU and Bloodborne next week. You guys make me wanna cancel my order, why you do this.

Fast, someone tell me that TLOU is the best thing that has ever happened in video gaming.

I would say that TLOU is worth playing despite the mechanics all being relatively mediocre, the story and environments and everything else is quite good, and there's only a couple of parts of the game that really end up being tedious. I bought a PS4 mostly for the exclusives as well, and for me, GoW and Horizon alone were worth it (I also needed a BR player I guess), and I'm excited for Spider-Man: Arkham Manhattan.
