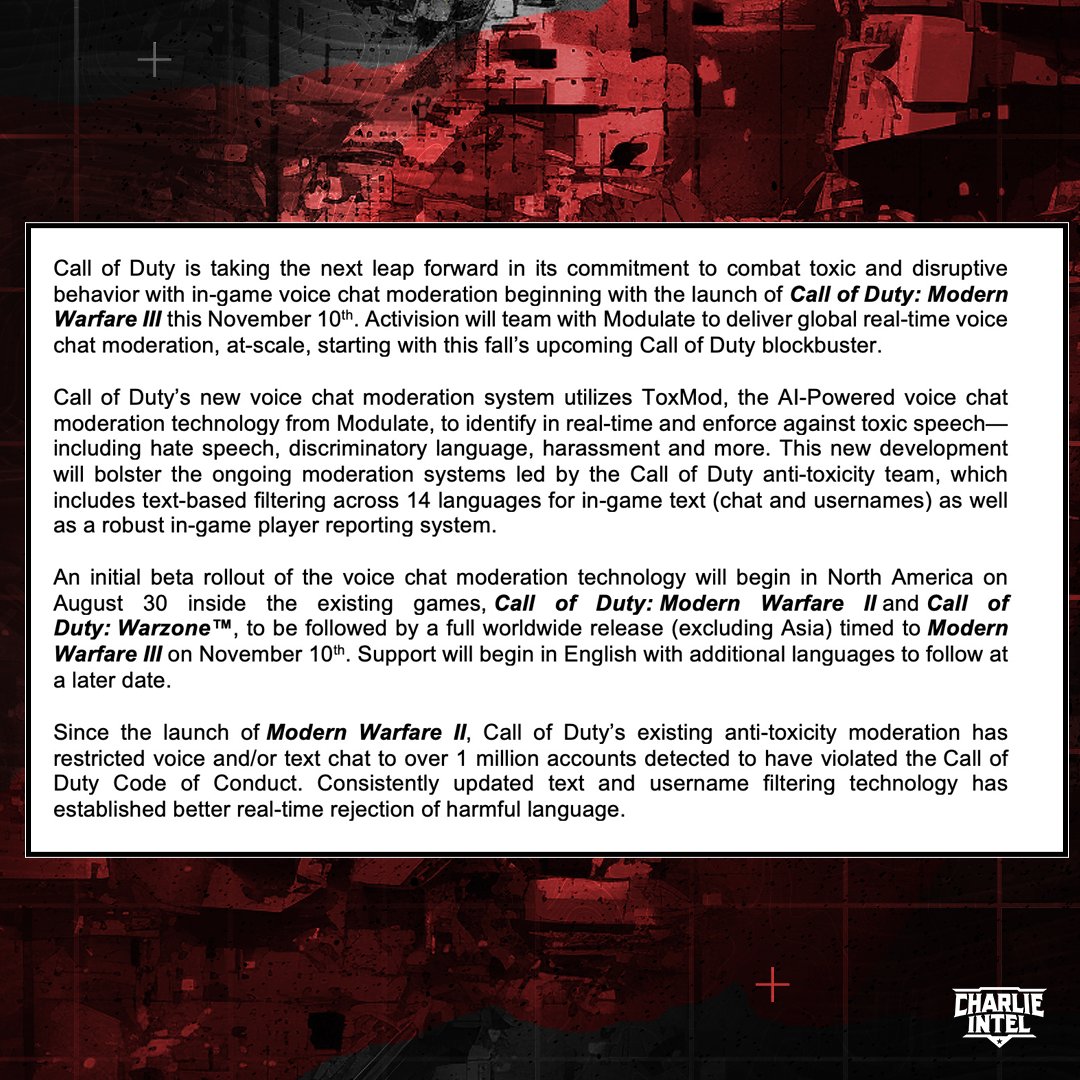

Activision will add real time in-game voice chat moderation in Call of Duty, AI powered detection - Live Today in MWII/WZ in NA, worldwide with MW3

- Thread starter Bishop89


I guess that makes sense. It'd be impossible to have real mods in every game. And it says it flags things for actual review by real people.

I swear to God I said <insert something that rhymes with some slur>!

Whenever video game accounts like Charlie Intel use BREAKING, especially in all caps, for video game news, I just go like

Man, this isn't like a severe weather statement

It's Call of Duty news

Regardless, this is good; hope it brings down hate speech

Modulate

- English

- Spanish

- Portuguese

- French

- German

- Italian

- Polish

- Dutch

- Swedish

- Mandarin and Cantonese

- Japanese

- Korean

- Russian

- Turkish

- Hindi

- Bengali

- Arabic

- Filipino and Tagalog

Seems most are in beta (Spanish) or Alpha (everything non-English or Spanish)

Can you imagine only training AI off of COD voice chat?

*shudders*

If it's accurate enough I'm for it. It's just impossible to have humans do some tasks; moderating live chatter from millions of players is one of those impossible things.

Well it's training to detect speech and presumably "intent", not to generate anything.

Everyone in COD is about to create their own language. It'll be interesting to see how well this works.

Now this would actually be incredible. People developing an entirely new language to get around moderation. There's already tons of gaming-specific lingo and terms, so they might as well go all the way.

Lol, I hope they don't use that data for training because then we are all doomed.

All it'll take is a couple thousand misfire bans and this whole thing will collapse like a house of cards.

Is this gonna end up like R6 Siege where people bait others into saying a slur?

Saw "Anyone remember the name of Eric Cartman's superhero persona?" all the time in chat.

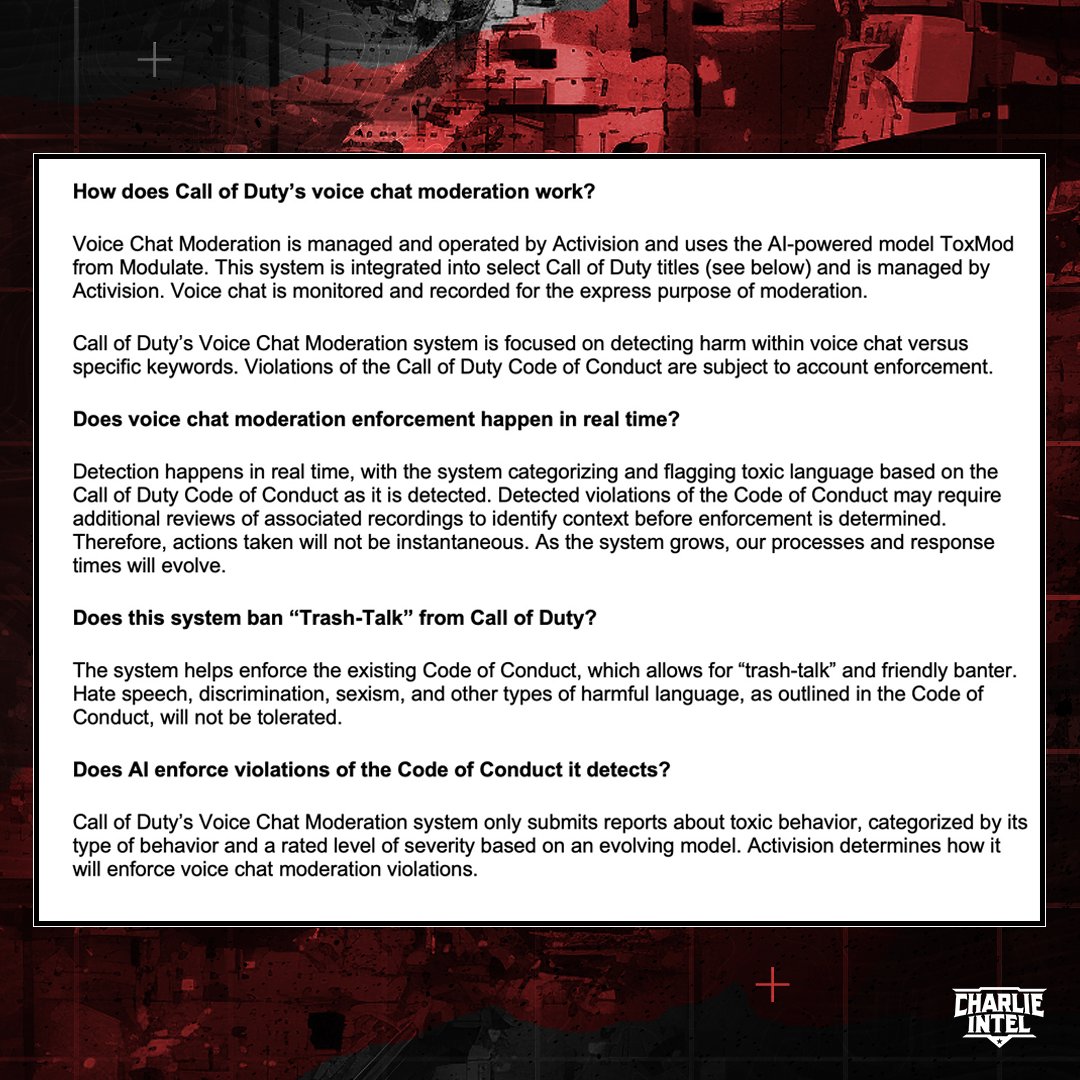

Why do people always assume these systems take automatic actions when they flat out say it's meant to auto flag users?

How many players play and use this feature? That sounds like it could be a lot of processing; who's paying for that?

I can understand banning text and report features etc but active voice moderation as a whole can't be cheap and it's Activision right?

Cool tech otherwise.

I think reports go to people to check for context.

Which makes me feel like they are also paying people to do this and listen in to conversations for context?

My only slight worry is this: if a person says "Let's go kill that player", how does it know the difference between someone obviously talking about the game and an actual threat?

200 sps (slurs per second)

If it's just flagging people and then a person is able to watch / listen that sounds like it could be good.

This is why people don't talk in game chat anymore. I expect tons of bans of COD players lmfao

People don't talk in game because they're in party chats.

People are in party chat or have others muted to avoid the people these AI thingies are meant to censor.

I'm pretty sure this AI only understands English and that's going to be a problem.

People speaking languages other than English might get false banned due to this AI.

Something like this can happen:

https://gamerant.com/apex-legends-japanese-players-ban-run-word/

Imagine if two Norwegian dudes are playing CoD and talking to each other. Then they say something that the AI thinks is racist, even though it's not. That could easily lead to false bans.

There's also a chance that people with speech impediments might get false banned.

However, it seems that the current system is just flagging at the moment, but knowing Activision they might eventually get rid of human moderators and automate their jobs with AI.

I don't know why people in this thread are saying "great" when the risk of players getting false banned is high.

"……now it's a ghost town"

Will be so accurate now

Also I don't think I have spoken in a public lobby in a game in over a decade? Always in party chat instead, even when playing solo

It would be way worse than that.

Let me guess: it won't detect languages, and Spanish speakers will be banned for saying the word black

"Listen here you faintcolt ****, you are now banned so stick your ******** **** in a blender, now **** ***. Have a lovely day, heil *****"

At first I was thinking of swearing and trash talk and was very confused. Like why bother in an M rated graphic military shooter. Meanwhile they sell mega bloks and still claim they don't want their games played by kids.

But hate speech makes sense.

If only it would work properly.

And I am wary of this type of thing in general anyway.

The complete meltdowns on twitter from people saying the game will die because they can't say the N word anymore make me happy

How exactly would you have human moderators listening in on every game?

There's no need to speculate. The site for the service they use literally lists all the languages it supports/partially supports.

Modulate

www.modulate.ai


How exactly would you have human moderators listening in on every game?

Of course you wouldn't - I was kidding!

This is going to backfire in massive ways.

The AI will false ban people, and people are going to leave the game and go to something else.

They definitely should use it for training, just not for content generation. What better source would you have to detect toxic behavior?