Battlefield V PC performance thread

- Thread starter GrrImAFridge

Which 2070 do you have, if you don't mind me asking? Gonna get a 2070 come February and I'm trying to gauge which one is the best of the ones available.

I grabbed the EVGA GeForce RTX 2070 XC Gaming, 08G-P4-2172-KR.

It's the one that's $549. I upgraded from an EVGA 970 and I'm shocked how quiet it is in comparison; even under load the fans are pretty quiet.

They both had the same brightness settings... PC uses different gamma...They couldn't match the brightness between the two so really hard to tell. You really need everything equalized for a comparison like that.

EVGA 1080ti SC2 - Intel i7 8700k - 32GB DDR4/3600Mhz - Samsung 960 SSD - Windows 10 (Build: 1803)

=========

Display: 4K 10-bit WCG HDR TV (3840x2160)

Rendering Resolution: 1728p - 1800p (100% Scale)

API: DX11

HDR? Off.

Driver Version: 416.81

Display Mode: Fullscreen

V-sync: Yes

Field-of-View (FOV): Default (55 Vertical / 70 Horizontal)

FPS Target: 60 FPS (Capped via In-game Settings)

Anti-Aliasing Mode: TAA High

Graphics Settings: Everything set to Ultra, except Lighting (High).

Film Effects: All Enabled. (Grain, Chromatic Aberration, Lens Effects, etc...)

Room for ReShade overhead? (Doubtful)

Results/Notes: When I first started the game, it had me at native 4K with everything set to Ultra, but during the prologue mission my FPS bounced between 38 and 46. Big yikes, but not unexpected with everything on Ultra. (4K/60 is just a dream after all.) Because of the competitive MP, this isn't really a game where you can settle for a 30FPS lock and call it a day; I had to get a stable 60FPS. First I lowered the rendering resolution to 1800p, which brought my average during the prologue mission up to 51-52 FPS. Next I dropped to 1728p and ticked TAA down to Low. That put me at a stable 60 throughout the prologue, but I wasn't happy with low-quality TAA. So I bumped TAA back up to High and dropped Lighting down to "High" instead, because I noticed that they bundled Lighting and Shadows together (which I am not a fan of, btw) and I always lower my shadows to High in these modern AAA games. At 1728p with this one setting dropped a level, I got my locked 60FPS for the entire prologue mission. Just to see if I could raise the resolution a notch, I tried 1800p once more and found that I was maintaining 60 FPS 98% of the time, with the occasional drop to 59.

It seems I will have to test more at 1800p, but I think it's likely I'll end up settling on 1728p. My desire was to play the game at its absolute visual best while also maintaining a competitive 60FPS. With these settings I believe I will be able to fulfill this desire. It will be interesting to monitor patch progress and see if graphics can be improved further if they attempt performance enhancements.

I'd like to note that unlike Shadow of the Tomb Raider (DX12 Mode) or AC Odyssey I do not appear to be CPU choked here: my highest CPU utilization during all of this was 76%. My GPU was at times 100% utilized, and averaged 90%+ during movement.
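
For a rough sense of how much GPU work each of those resolution steps removes, here is a minimal Python sketch of the pixel arithmetic. It assumes 16:9 render targets (so 1728p is taken as 3072x1728 and 1800p as 3200x1800) and that GPU cost scales roughly with pixel count, which is only an approximation. The function names are made up for illustration.

```python
def pixels_16x9(height: int) -> int:
    """Pixel count of a 16:9 frame with the given height (assumed aspect ratio)."""
    width = height * 16 // 9
    return width * height


NATIVE_4K = pixels_16x9(2160)  # 3840 x 2160


def share_of_native(height: int) -> float:
    """Fraction of native 4K pixels rendered at the given height."""
    return pixels_16x9(height) / NATIVE_4K


for h in (2160, 1800, 1728):
    print(f"{h}p: {pixels_16x9(h):,} px, {share_of_native(h):.0%} of native 4K")
```

By this estimate 1728p renders 64% of the pixels of native 4K and 1800p about 69%, which would be consistent with the small FPS gap between the two.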

Runs flawlessly for me, 1440p60 all Ultra, nice work DICE

i5 6600K at 4.4 GHz + GTX 1070

Give me your secret sauce. I have the identical setup and I wouldn't even call it close to flawless at 1080p let alone 1440p.

dropping frequently below 60 is flawless for some people. they consider hitting 60 sometimes to be 60 fps

Give me your secret sauce. I have the identical setup and I wouldn't even call it close to flawless at 1080p let alone 1440p.

No idea. GSync monitor, 16 GB of DDR4, and it's installed on an SSD. Drop the FPS limit to 60 in the game, as keeping it at 144 makes the CPU usage lock at 100% and then I get occasional stutters, although a lot of the time my FPS is in the low 70s.

dropping frequently below 60 is flawless for some people. they consider hitting 60 sometimes to be 60 fps

Lol, I use Afterburner to monitor my performance enough to know it's stable. Whatever few drops to 55 I get are not noticeable thanks to GSync.
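
Since part of the disagreement here is about what counts as "60 fps", here is a minimal sketch of how average FPS, 1% lows (using one common definition) and the share of slow frames can be computed from a list of frame times. The input is just a Python list of frame times in milliseconds; exporting those from Afterburner or any other tool is not shown, and the `summarize` helper and the sample numbers are made up for illustration.

```python
def summarize(frame_times_ms, target_fps=60.0):
    """Summarize a capture given per-frame render times in milliseconds."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    n = len(frame_times_ms)
    budget_ms = 1000.0 / target_fps  # about 16.67 ms per frame at 60 fps

    # Average FPS over the whole capture: frames divided by total seconds.
    avg_fps = 1000.0 * n / sum(frame_times_ms)

    # "1% low": average FPS over the slowest 1% of frames (at least one frame).
    slowest = sorted(frame_times_ms, reverse=True)
    k = max(1, n // 100)
    low_1pct_fps = 1000.0 * k / sum(slowest[:k])

    # Share of frames that took longer than the target frame budget.
    share_below = sum(1 for t in frame_times_ms if t > budget_ms) / n

    return {
        "avg_fps": avg_fps,
        "low_1pct_fps": low_1pct_fps,
        "share_below_target": share_below,
    }


# Made-up capture: 95 fast frames and 5 slow ones.
sample = [14.0] * 95 + [25.0] * 5
stats = summarize(sample)
print(f"avg {stats['avg_fps']:.1f} fps, "
      f"1% low {stats['low_1pct_fps']:.1f} fps, "
      f"{stats['share_below_target']:.0%} of frames under 60 fps")
```

In that made-up capture the average sits well above 60 even though one frame in twenty misses the 60 fps budget, so two people could be looking at the same kind of run and describing it very differently.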

I bet they mostly play 32p/Frontlines games. FPS is a lot better there. The i5 6600K is quite a bit faster at multi core stuff than the i5 I had so maybe that is the reason.

Anyone running anything like an i7 2600k and 970 to post what performance is like?

I have an i7 2700k and a 980 Ti and it's really inconsistent for me.

I still need to play around with the settings, but I get between 45-65fps at 3440x1440 with most settings (I've tried Ultra and Low and for some reason it's only a 10fps difference, so something is definitely wrong on my end).

The Beta gave me around 60fps at 1920x1080 at ultra, so you should be fine with the 2600k and the 970.

No idea. GSync monitor, 16 GB of DDR4, and it's installed on an SSD. Drop the FPS limit to 60 in the game, as keeping it at 144 makes the CPU usage lock at 100% and then I get occasional stutters, although a lot of the time my FPS is in the low 70s.

Lol, I use Afterburner to monitor my performance enough to know it's stable. Whatever few drops to 55 I get are not noticeable thanks to GSync.

then you arent playing conquest/grand operations 64 player

Turns out I did have vsync on all this time lol.

FPS fluctuates between 75-90 with everything set to ultra at 4k.

2080 Ti FE

8700k

16gb ram @3000mhz

(oh this is on 64 player Conquest)

0 for 2 for you then lol

That's all I play. Dunno what about this is so difficult for you.

I can't seem to get a solid 60 without dipping down to medium settings. Pretty disappointing from my rig, I feel like I'm missing a crucial setting somewhere.

6500k

GeForce 1080

32gb ram

Samsung 940nvme drive

Constant dips to 40 on high at 3400x1440

Is Rotterdam glitched on Grand Ops? Seems to go on forever after the city gets bombed and no team can win lol

If I quit do I lose out on all the upgrades and rank ups I earned this match?

I'm a bit bummed my game can't play a smooth 60 fps with my i5 4670k. Just watched some Xbox One X gameplay and it looks like such a better experience than I'm having. I get bad framerates and some stuttering. Don't see any of that in the Xbox footage. Shouldn't my CPU still be good enough to match up? Plus my GPU blows the Xbox One X away so I feel it should help the performance more than it does.

Just idk what the difference is and maybe they just optimized harder on the console version compared to PC? People are known to just buy more expensive PC parts to brute force performance so maybe that drives them to not worry about it too much.

whats your gpu and which X footage are you referring to. but yes generally consoles achieve much better cpu efficiency than pcs. especially under dx11

I have an i7-6700k and from what I can see it's definitely taking more cores/threads. Well, looks like there's a game that definitely benefits from more than 4 cores now.

I had hyperthreading off for a while because Destiny 2 performs better with it disabled for me, and when I tried the BFV beta it ran like garbage. 40 fps often. Turning hyperthreading back on nearly doubled my FPS. Game really loves threads.

Ok, that's cool. Glad BFV seems to love HT at least.

Slight exaggeration on nearly double, minimum fps increased about 50%. Mid 30s to high 50s. Average above ~70fps, while without HT it was probably around 45. My i7 is from the Lynnfield line. Very very old.

This was entirely CPU bound as well, since I played on minimum settings with the GPU at ~50% usage.
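
Putting rough numbers on that, a small Python sketch of the percentage gains. The inputs are one reading of the approximate figures in the post (35 and 58 standing in for "mid 30s" and "high 50s", 45 and 70 for the averages), so treat them as assumptions rather than measurements.

```python
def pct_gain(before_fps: float, after_fps: float) -> float:
    """Percentage increase going from before_fps to after_fps."""
    return (after_fps - before_fps) / before_fps * 100.0


# Assumed values standing in for the post's rough figures.
print(f"minimum: +{pct_gain(35, 58):.0f}%")
print(f"average: +{pct_gain(45, 70):.0f}%")
```

With those assumed inputs both gains land between 50% and 70%: a big jump from hyperthreading, but short of the 100% that a true doubling would mean.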

This is my system.

Ryzen 5 2600X

Strix Vega 64 (A goddamn furnace)

16 gig of RAM

3400 x 1400 Freesync Ultrawide

I've only played a few single-player missions so far, but performance is great! I'm happy with locking it to 60 and it pretty much stays there. Minimal dips into the 50s that I don't notice due to FreeSync doing its job. I think we can notch this one up as a rare win for AMD cards.

I have the same CPU and an RX 480 @1440p. You'll be fine in MP too (never drops below 70-80 on everything high). The 2600 X and non X are awesome for that price. What temperatures do you get on the Vega 64 though?

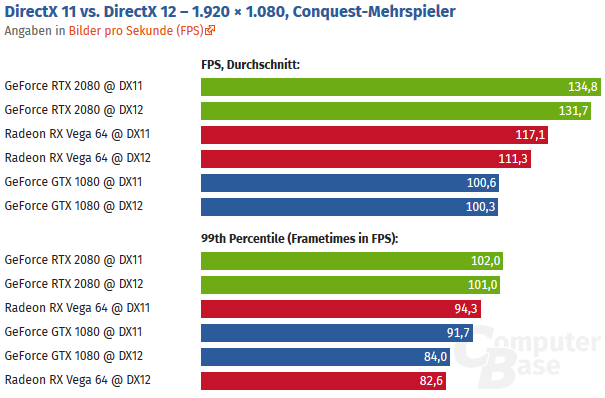

vega 64 performs just under a 2080 in this title

It's pretty wild that consoles with essentially bad laptop CPUs can run this engine well but much more powerful desktop CPUs have a lot of trouble

i5 6600k

GTX 970

16 GB of RAM

I can run Medium on all settings but the frame rate varies between 45 and 70 FPS :(

My i5 is definitely showing its age now.

I only have the non-K version of the 6600 paired with an R9 390. I probably won't enjoy this either.

im not an AMD aficionado but id love to see pcs start to approach the efficiency level of consoles regardless of gpu vendor so im still for properly implemented low level apis. id tech 6 is a good example

The thing is -- and this is what I always said about low level APIs -- even when done very well what will happen is that it will be efficient on one or two GPU architectures.

A well-made game on a higher level API, with well-made driver support, can run just a bit less efficiently, but on tons of architectures, including ones that potentially aren't out at the time when the game is released.

In a way that might make the GPU market harder for a new/underdog player to be competitive in.

theres only ever realistically going to be 2 architectures any way. and i cant say for sure whether its strictly due to the api, but their engine certainly seems rather far ahead in the pc space in terms of performance. frostbite comes the closest but nothing much else

My Vega has fairly decent undervolt, but it can still hit 80-83 degrees when it's maxed for a period of time. That's not the worst of it. VR SOC can get to 115 degrees under load...yes.

Apparently it's due to absolutely atrocious quality control on Asus' part: the thermal pads don't make any kind of proper connection. I'm waiting on replacement pads/paste in the mail. People on reddit have managed to drop GPU temp by 8-9 degrees and VOC by 30, just by fixing Asus' truly atrocious QC. I can't believe I have to do this on a card I paid $750 AU for, but here we are. I'm very tempted to sell and pick up a 2080. But FreeSync is really important to me and I'm not keen on spending up big on a GSYNC screen.

This is the video I watched. I would love for my game to run that consistently. Even if I turn all settings to low and resolution scale all the way to 50% of 1080p and it looks terrible, it still runs about the same. Just completely CPU bound. Guess the game really just needs more cores/threads even if they are a lot slower. That console footage is a dream compared to when I play, even if I can make the graphics look a little better on the PC.

https://youtu.be/D23lqQYnCKg

My GPU is a GTX 1080. I've always been aware I'll be CPU bound since I upgraded to a 1080 2 years ago but no other game is really a problem since I only play at 1080p 60fps with sometimes using DSR. Battlefield is basically the only thing that ruins my performance to where 1080p 60fps isn't achievable. Except for something like Planet Coaster with tons of stuff in the level. But I don't feel bad when that game runs slower of course.

gtx1080 for 1080p 60fps? oooof!

We need to get GameGPU some RTX 2000 series cards.

The same for me. It is, most probably, caused by Future Frame Rendering = On

Deactivating it at DX11 will massively drop GPU load and the game starts to hit below 60 fps for me (vs 80-140).

Deactivating it at DX12 doesn't introduce the GPU load stuck at 70% problem, but it stutters like crazy.

Fixed it. What I did is install my Logitech mouse software and set the report rate to max (1000). Now the game is perfectly smooth.

Hope it will help you.

Runs flawlessly for me, 1440p60 all Ultra, nice work DICE

i5 6600K at 4.4 GHz + GTX 1070

Same, except at 1080p.

dropping frequently below 60 is flawless for some people. they consider hitting 60 sometimes to be 60 fps

I bet they mostly play 32p/Frontlines games. FPS is a lot better there. The i5 6600K is quite a bit faster at multi core stuff than the i5 I had so maybe that is the reason.

I also have flawless performance on my i5-6600k at 4.3 on 64 player servers, max detail (with Shadowplay recording simultaneously). Also have Afterburner running usually. I think there's a few people here with problems with their rigs. Might be software misconfiguration, slow RAM or something.

Tried everything I could on my end. The game is a 60-80fps game in MP during combat at 1440p with 105% resolution scaling and ultra settings, with plenty of stutters. This is with an RTX 2080 and i7-6700k OC'd at 4.5. FPS is largely the same whether it's 100% scaling or 130% scaling.

not sure what is going on but thats definitely disappointing, but it seems like this is a problem exclusive to BFV. Game is CPU heavy but according to benchmarks I should be doing better here for sure
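
One way to read the resolution-scale result above: if raising the scale adds a lot of pixels but barely moves the frame rate, the GPU is probably not the limit. A minimal sketch of that heuristic in Python, assuming the in-game resolution scale applies per axis (so 130% would mean 1.69x the pixels) and using an arbitrary 10% tolerance. The function names, the threshold and the sample FPS values are all assumptions for illustration.

```python
def pixel_ratio(low_scale: float, high_scale: float) -> float:
    """How many times more pixels are rendered at high_scale vs low_scale,
    assuming the scale is applied to both width and height."""
    return (high_scale / low_scale) ** 2


def likely_cpu_bound(fps_low_scale: float, fps_high_scale: float,
                     tolerance: float = 0.10) -> bool:
    """Guess CPU-limited if FPS fell by no more than `tolerance`
    (an arbitrary threshold) after raising the resolution scale."""
    fps_drop = 1.0 - fps_high_scale / fps_low_scale
    return fps_drop <= tolerance


print(f"100% -> 130% scale: {pixel_ratio(1.0, 1.3):.2f}x the pixels")
print(likely_cpu_bound(70, 68))  # hypothetical: FPS barely moves
print(likely_cpu_bound(70, 45))  # hypothetical: FPS falls with pixel count
```

This only hints at where the limit is. Stutter, driver overhead or a frame cap can all flatten the numbers in the same way, so it is worth checking CPU and GPU utilization alongside it.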

I'm surprised that I'm actually getting better performance in this than in BF1.

I turned some settings down to medium and I'm maintaining 120+ FPS pretty much all the time, in less busy areas of maps I'm often close to or at my cap of 162 FPS as well. This is in the infantry modes.

In CQ I don't hit that cap all that often, but still maintain 120+ FPS most of the time.

Playing at 1440p with a 1080Ti and i7 5820K@4GHz, 16GB RAM.

Yeah screw DX12 and Vulkan, let's stay on DX11 another 7 years. Very forward thinking...

The consoles can't keep 60fps either. Even the X.

There are at least 5 GPU architectures currently in use by PC gamers.

I'm simply pointing out that having games optimize for a very specific GPU architecture (or a small number of architectures) is absolutely not something that is exclusively advantageous on PC.

Hardware abstraction is something that exists for a reason, and moving to higher or lower levels of abstraction introduces a complex set of tradeoffs. I miss that aspect in the general discussion of it.

the gameplay I see in this video:

https://www.youtube.com/watch?v=D23lqQYnCKg

looks way smoother. Even when I implement a 60fps cap or run my monitor at 60fps and vsync, I can't get rid of the random hitching/stutters

Same, except at 1080p.

I also have flawless performance on my i5-6600k at 4.3 on 64 player servers, max detail (with Shadowplay recording simultaneously). Also have Afterburner running usually. I think there's a few people here with problems with their rigs. Might be software misconfiguration, slow RAM or something.

a 1070 isnt enough for a solid 60 @1440p/ultra based on the benches ive seen and how my own titan xp performs

There are at least 5 GPU architectures currently in use by PC gamers.

I'm simply pointing out that having games optimize for a very specific GPU architecture (or a small number of architectures) is absolutely not something that is exclusively advantageous on PC.

Hardware abstraction is something that exists for a reason, and moving to higher or lower levels of abstraction introduces a complex set of tradeoffs. I miss that aspect in the general discussion of it.

Are gcn variants not similar enough to be counted all as one? And maxwell/pascal the same? Turing seems to do fine as well benching previous games that were never designed with it in mind

Maybe BF V has the same issue that I once had with BF 1 and Battlefront 2 where I needed to disable Windows 10's fullscreen optimization on the game's exe. Without doing that, I was getting insane stuttering and hitching.

Went ahead and performed a mild overclock (~4.4ghz) on my 6600k and I now seem to hold at least 60fps during a couple hours of play. So it's worth doing if anyone else is in a similar situation.

the gameplay I see in this video:

https://www.youtube.com/watch?v=D23lqQYnCKg

looks way smoother. Even when I implement a 60fps cap or run my monitor at 60fps and vsync, I can't get rid of the random hitching/stutters

the LOD is dramatically worse than pc version at ultra. that will help cpu side performance a lot.