Hi Folks, was hoping for a little bit of advice.

I got myself a Retrotink 5x about a month ago and just got round to playing about with it now. I've been using it on an LG C3, which is also new to me.

I plugged my N64 into it and was playing about with the various resolution options. One thing that I noticed was that the scaling up to 1080p (IMO) looked better than scaling it up to 1440p (There was also a second issue that I'll speak about in a moment). I was just wondering if this was "normal" behaviour?

The TV is capable of outputting 4k, so it certainly can display a 1440p signal. However, am I correct in thinking that because 1440p cannot be integer scaled to 4k (4k is 1.5x 1440p), this is the reason why it looks "blurry"? Since 1080p can be integer scaled up to 4k, it remains crisp, right?
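For reference, the arithmetic behind that question can be checked with a short Python sketch. It only computes the ratio between a source height and a 2160-line panel; the function names are made up for illustration, and it says nothing about which scaling method the TV actually applies.

```python
from fractions import Fraction

PANEL_HEIGHT = 2160  # vertical resolution of a 4K panel


def scale_factor(source_height: int, panel_height: int = PANEL_HEIGHT) -> Fraction:
    """Return the exact vertical scale factor from source to panel."""
    return Fraction(panel_height, source_height)


def is_integer_scale(source_height: int, panel_height: int = PANEL_HEIGHT) -> bool:
    """True when every source line maps to a whole number of panel lines."""
    return panel_height % source_height == 0


if __name__ == "__main__":
    for height in (1080, 1440):
        factor = scale_factor(height)
        print(f"{height}p -> 2160p: x{float(factor)} "
              f"(integer scale: {is_integer_scale(height)})")
```

A non-integer factor means some source lines have to be spread unevenly across panel lines, which a scaler usually handles by interpolating, and interpolation is one common source of softness.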

Is there any way to get the C3 to display a 1440p signal without blur? And even if there is, would it just be a fruitless endeavour since the int-scaled 1080p signal is pretty much what I want anyway? Would appreciate some thoughts/guidance on this.

I don't think the C3 uses integer scaling even with 1080p, though I can't say for sure. I know for certain that the C9 doesn't, but I'm not sure how much the scaling has changed for subsequent models. As for why 1080p still looks better even if it's not integer scaled, maybe it's because less interpolation is needed for it? I'm really just guessing on that one.

If you are using CRT masks, however, 1440p does still have an advantage over 1080p since the masks can be a lot finer and more detailed. Otherwise, I'd just stick with 1080p.

The second issue (Which depending on the answer to the first issue may end up not being a problem at all) was that when I ran the tink at 1440p resolution, I noticed the frame rate dropped pretty substantially.

Now, just as caveats to this, I haven't yet updated the firmware of the Tink (just out of the box firmware right now) and I was also using a "standard" HDMI cable. Would either of these be responsible? I don't really know all that much about HDMI cables other than that there are multiple revisions for higher bandwidth, and I'm pretty sure I don't have any of those lying around right now.

The cable shouldn't have anything to do with the softness, but I'm not sure about the framerate changing. One thing that doesn't help is that 1440p doesn't always play well with certain TVs, which is why there are two different 1440p options on the 5X. Which 1440p mode did you select? What vertical sync setting did you use? For which system or systems has this happened?

Finally, I saw that with updated firmware I can have the tink output 4k, but at 25fps. Frame-rate is a big deal for me, so I just wanted to get some opinions as to whether or not it's worth using this mode? Obviously quite a few (PAL) N64 games run at sub 25fps anyway, but for pretty much every other console the games should be at least 30, so I'm not sure I really want to take the hit on that one (especially if, as I mentioned before, it would end up looking pretty much the same as the 1080p signal).

Thanks for your help :)

I should tell you that the 4K option was removed in a firmware update some time back. If you upgrade to the latest version, you'll be maxing out at 1440p. You can upgrade and downgrade to any firmware revision freely, so don't worry about changing your mind if you upgrade to the latest version.

It's not a major loss anyway. Since the refresh rate is halved, motion tends to look horrible with it. If you're playing a very slow paced game without a lot of movement, then it might be tolerable, but otherwise it's just not worth it. The limited utility of the 4K mode on the 5X was why it was eventually removed.

Maybe the scanline filter is a good idea, I'll try it out. Weirdly enough I can't normally get my head to sit right with them. Although I grew up on CRTs from anything between 13-20 inch, I feel like I always remember games through rose-tinted glasses. I don't really remember the scanlines or how they looked and whenever I see the filters now I feel it darkens the image too much. Oh well.

All in all it doesn't matter so much, I felt the 1080p image looked nice and crisp anyway so if I have to stick with that, so be it.

Scanlines on a real CRT could vary significantly in conspicuousness based on different factors like the specific tube used or how the display was adjusted. Older consumer sets tended to show them the least, while newer sets showed them more, and high resolution pro monitors like the PVMs and BVMs had the thickest scanlines of all. The same goes for the look of the tiny red, green, and blue phosphors that make up the mask or grille under the tube's glass.

Now in terms of brightness, real CRTs could be very bright even with scanlines. While modern displays can get far brighter, they tend to falter when you try to imitate that CRT look. Fake scanlines alone can virtually halve the brightness depending on how strong they are. It gets worse when you add a CRT mask on top of it and worse still with BFI in the mix.
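To put a rough number on the "virtually halve" part, here is a minimal Python sketch of the arithmetic. It assumes a simplified model in linear light where some fraction of the output rows is darkened by a given strength; the function and parameter names are hypothetical and do not correspond to the 5X's actual scanline settings.

```python
def average_brightness(dark_fraction: float, strength: float) -> float:
    """Estimate relative average brightness after applying scanlines.

    dark_fraction: share of output rows covered by the dark scanline (0..1).
    strength: how much those rows are darkened (0 = untouched, 1 = black).
    Assumes linear-light values; real displays and gamma will differ.
    """
    if not (0.0 <= dark_fraction <= 1.0 and 0.0 <= strength <= 1.0):
        raise ValueError("dark_fraction and strength must be between 0 and 1")
    return 1.0 - dark_fraction * strength


if __name__ == "__main__":
    # Every other row fully black versus every other row half-darkened.
    for strength in (1.0, 0.5):
        print(f"strength {strength}: {average_brightness(0.5, strength):.2f}")
```

Under this model, full-strength scanlines covering half the rows leave about half the light, and a mask or BFI would multiply that figure down further.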

Fortunately, there are some ways to mitigate the drop in brightness. The first is to experiment with the scanline settings as well as the gamma and color boost settings in the 5X's Post-Processing menu. This has some limitations though. With the former, reducing the scanline effects lessens the CRT look, and with the latter, extreme settings can distort the color or cause clipping.

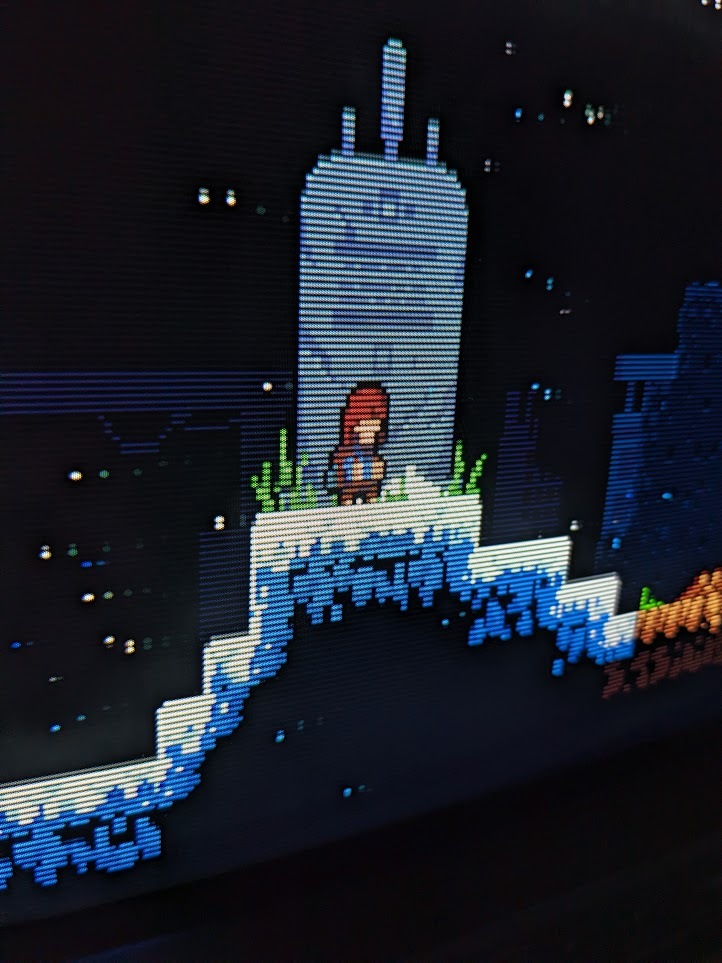

The next thing you can do is turn up the peak luminance setting on the display itself to compensate (this would be the OLED Light setting on LGs). This is great if your TV has enough headroom, but that isn't always the case. Further, most TVs limit their maximum brightness in SDR. The good news is the 5X has an HDR mode you can activate to get the maximum brightness out of the TV. The bad news is the 5X HDR implementation is very flawed due to limitations of the hardware, so certain colors can get distorted. I actually posted some pictures demonstrating this a little earlier in this thread if you'd like to see some examples.

The only other options are upgrading to the RT4K (very expensive) or using emulation on a PC (defeating the point of using original hardware), though both at least have proper HDR support. I'm sorry to say there's no easy way around the brightness limitation with the RT5X, so you have to pick your poison should you want to emulate the look of a CRT.